For years, any conversation about software testing strategy started and ended with the testing pyramid. It was the model that defined the scope of testing: what to test, how much to test, and at which level. As modern technologies and frameworks were adopted, the approach to software quality was redefined as well. Teams constantly search for the most effective way to ensure software quality without slowing down delivery. One of the most influential concepts to emerge from this evolution is the Testing Trophy Model.

This article explores what the Testing Trophy Model is, why it was introduced, how it differs from the Test Pyramid, its components, benefits, and how teams can implement it effectively.

The Origins of the Testing Trophy

The Testing Trophy Model was popularized by a JavaScript educator and testing advocate, Kent C. Dodds. It was proposed as an alternative to the Test Pyramid Model and emerged primarily from real-world frustrations with how teams interpreted and applied the traditional Test Pyramid.

While the Test Pyramid is conceptually useful, it is often applied in a way that overemphasizes unit tests at the expense of meaningful integration tests. This led to systems that were “well unit-tested” but failed in real-world scenarios.

The Testing Trophy Model proposed by Kent Dodds is an updated model better suited to modern application architectures, especially front-end-heavy, API-driven systems.

What Is the Testing Trophy Model?

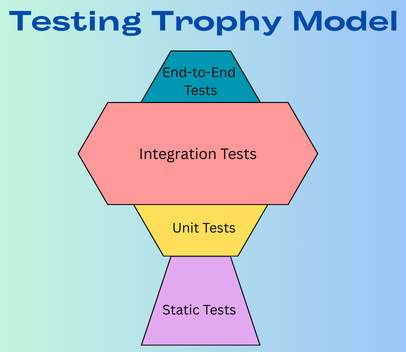

The Testing Trophy Model is a software testing strategy that emphasizes integration tests as the primary, highest-ROI testing method, rather than placing heavy emphasis on unit tests like the traditional Testing Pyramid. It is made up of four layers:

- A small base of static tests at the bottom

- A moderate layer of unit tests

- A large section of integration tests

- A smaller number of end-to-end tests on top

The Trophy Model prioritizes test speed, reliability, and confidence in user-facing behavior with this arrangement.

The Testing Trophy Model visually resembles a trophy rather than a pyramid, hence the name.

The key idea behind the trophy is that integration tests should form the bulk of your testing strategy, because they provide the highest confidence for the cost.

Key Principles Behind the Testing Trophy

- Test Behavior, Not Implementation: Tests should verify “what” the system does and not “how” it is done.

- Prefer Real Dependencies When Possible: Mock only external systems like payment gateways. Avoid unnecessary mocking.

- Optimize for Confidence per Cost: Assess the cost for each test and ensure the tests provide meaningful coverage. The maintenance cost for tests should be justified.

- Maximize Return on Investment (ROI): Favor static checks and integration tests, which offer quick feedback loops and catch a large share of defects for the effort they require.

- Context-Dependent Strategy: Adjust the specific balance of tests in the Trophy Model based on project needs, such as criticality (e.g., business-critical systems may require more E2E tests) and team skills (e.g., teams with strong static analysis experience may rely more on it).
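The first principle, testing behavior rather than implementation, can be illustrated with a small sketch. The `Cart` class and its method names are hypothetical and exist only for this example; the point is that the check relies on what a caller can observe, not on how the cart stores its data.

```typescript
// Hypothetical shopping cart used only to illustrate the principle.
class Cart {
  // Internal storage: an implementation detail that tests should not inspect.
  private lines: { sku: string; priceCents: number; quantity: number }[] = [];

  add(sku: string, priceCents: number, quantity = 1): void {
    const existing = this.lines.filter((line) => line.sku === sku)[0];
    if (existing) {
      existing.quantity += quantity; // merge repeated items into one line
    } else {
      this.lines.push({ sku, priceCents, quantity });
    }
  }

  totalCents(): number {
    return this.lines.reduce(
      (sum, line) => sum + line.priceCents * line.quantity,
      0
    );
  }
}

const cart = new Cart();
cart.add("mug", 1250);
cart.add("mug", 1250);
cart.add("shirt", 2000);

// Behavior-focused check: only the public result matters.
const observedTotal = cart.totalCents(); // 2 * 1250 + 2000 = 4500
```

A test that instead asserted on the number of entries in `lines` would break if the internal storage were changed, even though the cart would still behave correctly for its users.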

Structure of the Testing Trophy Model

The Testing Trophy is composed of four main components:

- Static Tests (Base layer): Consists of tools and techniques that catch errors before code execution. E.g., linters, type checkers (TypeScript), and formatting tools.

- Unit Tests (Middle layer): Tests to verify small application parts in isolation.

- Integration Tests (Largest Layer): This is the core part and contains tests that ensure different components work together seamlessly, balancing speed and confidence.

- End-to-End Tests (Top): These tests validate complete user flows and are the most realistic but also the slowest.

Let’s explore each in detail.

1. Static Tests (The Base)

Static tests include:

- Type checking (e.g., TypeScript, Flow)

- Linters (ESLint, style checkers)

- Static code analysis tools

- Formatting tools (Prettier)

- Code quality tools

Static testing provides extremely fast feedback and prevents trivial bugs at minimal cost. Static testing improves code consistency and reduces cognitive load during reviews.

For example, a type mismatch in TypeScript code will cause a failure immediately. Similarly, ESLint will immediately identify and flag unreachable code.
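As a minimal sketch of the type-mismatch case, consider the snippet below. The `CartItem` type and `lineTotal` function are made up for illustration. The `@ts-expect-error` directive is used so that the file still compiles while showing where the compiler would otherwise report an error.

```typescript
// Hypothetical type for illustration.
type CartItem = { sku: string; priceCents: number; quantity: number };

function lineTotal(item: CartItem): number {
  return item.priceCents * item.quantity;
}

const validItem: CartItem = { sku: "mug", priceCents: 1250, quantity: 2 };
const mugTotal = lineTotal(validItem); // 2500

// Without the directive below, the type checker rejects the next line before
// any test runs, because quantity is a string rather than a number.
// @ts-expect-error
const invalidItem: CartItem = { sku: "mug", priceCents: 1250, quantity: "2" };
```

No test had to be written or executed to catch the second assignment, which is what makes this layer so cheap.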

2. Unit Tests (Smaller Than in the Test Pyramid)

Unit tests typically target:

- Pure functions

- Business rules

- Algorithms

- Utility methods

In the Testing Trophy Model, unit tests are important but not dominant. The main critique of overemphasizing unit tests is that they don’t verify how components work together and may give developers a false sense of security. Even a “well unit tested” application may fail when various components work together.

Unit tests often test implementation details and may break easily during refactoring. For example, mocking too many dependencies can lead to tests passing even when the actual system fails in production.

Unit tests remain most valuable for:

- Complex logic

- Edge cases

- Performance-sensitive functions

- Pure functions
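The kind of unit test that still earns its place might look like the sketch below: a pure function with a few edge cases. The `applyDiscount` function and the `expectEqual` helper are hypothetical; in a real project the assertions would come from a test runner.

```typescript
// Pure function: the result depends only on the arguments.
function applyDiscount(priceCents: number, percent: number): number {
  if (percent < 0 || percent > 100) {
    throw new RangeError("percent must be between 0 and 100");
  }
  return Math.round(priceCents * (1 - percent / 100));
}

// Minimal stand-in for a test runner's assertion helper.
function expectEqual(actual: number, expected: number): void {
  if (actual !== expected) {
    throw new Error(`expected ${expected}, got ${actual}`);
  }
}

expectEqual(applyDiscount(2000, 25), 1500); // typical case
expectEqual(applyDiscount(2000, 0), 2000); // edge case: no discount
expectEqual(applyDiscount(2000, 100), 0); // edge case: full discount
expectEqual(applyDiscount(999, 50), 500); // edge case: 499.5 rounds up
```

Because the function has no dependencies, nothing needs to be mocked, and the test is unlikely to break during refactoring unless the pricing rule itself changes.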

3. Integration Tests (The Largest Layer)

Integration tests are the core of the Testing Trophy Model. These tests verify how multiple units work together and maximize the ROI.

According to the Trophy Model, integration tests better reflect real user behavior and catch integration bugs early. With integration tests, the system is tested more closely to how it runs in the real world. Integration tests strike a balance between speed, reliability, and realism, providing higher confidence.

Integration tests are slower than unit tests but much faster and less flaky than end-to-end tests. These features make them the sweet spot in the Testing Trophy.

Integration tests typically cover:

- Component + state management

- API + database

- UI components interacting with services.

- Business logic interacting with persistence layers.

Examples include:

- A React component rendered with real context providers.

- An API endpoint tested against a real test database.

- A microservice interacting with the actual business logic modules.
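The sketch below shows the shape of such a test in plain TypeScript. All names (`CheckoutService`, `InMemoryOrderRepository`, `PRICES_CENTS`) are hypothetical, and an in-memory repository stands in for a real test database so that the example stays self-contained. The service and the repository are exercised together rather than replacing the repository with a mock.

```typescript
type Order = { id: number; items: string[]; totalCents: number };

// A real, if simple, repository implementation (not a mock).
class InMemoryOrderRepository {
  private orders: Record<number, Order> = {};
  private nextId = 1;

  save(items: string[], totalCents: number): Order {
    const order: Order = { id: this.nextId++, items, totalCents };
    this.orders[order.id] = order;
    return order;
  }

  findById(id: number): Order | undefined {
    return this.orders[id];
  }
}

// Hypothetical price list for the example.
const PRICES_CENTS: Record<string, number> = { mug: 1250, shirt: 2000 };

class CheckoutService {
  private readonly orderRepo: InMemoryOrderRepository;

  constructor(orderRepo: InMemoryOrderRepository) {
    this.orderRepo = orderRepo;
  }

  placeOrder(items: string[]): Order {
    if (items.length === 0) throw new Error("cart is empty");
    const totalCents = items.reduce((sum, item) => {
      const price = PRICES_CENTS[item];
      if (price === undefined) throw new Error(`unknown item: ${item}`);
      return sum + price;
    }, 0);
    return this.orderRepo.save(items, totalCents);
  }
}

// Integration-style check: place an order through the service, then read it
// back through the repository to confirm the two parts work together.
const orderRepo = new InMemoryOrderRepository();
const checkout = new CheckoutService(orderRepo);
const placed = checkout.placeOrder(["mug", "shirt"]);
const stored = orderRepo.findById(placed.id);
```

If the repository were mocked, a bug in how orders are saved or looked up would go unnoticed; here, `stored` only matches `placed` when both pieces behave correctly.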

4. End-to-End Tests (Top Layer)

End-to-end (E2E) tests are the topmost layer in the Testing Trophy Model and simulate real user behavior across the entire application stack. The Trophy Model cites several reasons for limiting E2E testing. E2E tests are slower and more brittle than other tests. They also require a full system setup and can be flaky due to network, environment, or timing issues.

E2E tests are best reserved for:

- Critical user journeys

- Regression testing

- Smoke testing production-like environments

Typical E2E activities include:

- Clicking through UI flows

- Submitting forms

- Testing authentication

- Validating user journeys

Testing Trophy vs Test Pyramid

The Testing Trophy Model prioritizes integration tests and static analysis, focusing on high-ROI tests for modern, component-based applications. Test Pyramid, on the other hand, emphasizes a massive base of fast unit tests. The trophy is generally better for frontend/web apps, while the pyramid is better suited to backend systems with heavy logic.

The following table summarizes the key differences between the Testing Trophy Model and the Test Pyramid.

| Aspect | Testing Trophy | Test Pyramid |
|---|---|---|
| Structure | Static (base), Unit (middle), Integration (largest part), E2E (top) | Unit (base), Integration (middle), E2E (top) |
| Dominant Layer | Integration tests | Unit tests |
| Focus | Confidence and ROI | Cost and speed |
| Emphasis | Realistic interaction | Isolation |
| Common Issue | Balanced coverage | Over-mocking |
| Real-world Fit | Modern web applications, microservices, and front-end development | Backend-heavy systems, complex business logic |
| Static Checks | Strong foundation | Not emphasized |
| Pros | Tests interactions (closer to user experience), higher confidence, faster than full E2E | Fast execution, easy to isolate failures, low maintenance |

Why the Testing Trophy Works Well in Modern Applications?

Modern applications are commonly built on:

- Front-end frameworks (React, Angular, Vue)

- Microservices

- APIs

- Event-driven systems

- Cloud-native infrastructure

In these systems, many bugs occur at integration boundaries as data flows through multiple layers, and UI logic is often tightly coupled to state management.

The Testing Trophy Model directly addresses the complexities of these interconnected architectures and works well for modern applications, particularly web apps and services. It optimizes for confidence, speed, and maintainability by emphasizing integration tests that better catch real-world problems.

- High Confidence Through Realistic Testing: Unit tests can only check small pieces of code. Integration tests verify that the unit-tested parts work well together. The Trophy Model also adopts a user-centric approach, with the core philosophy that the more your tests resemble the way your application is used, the more confidence they will give you.

- Optimal Balance of Speed and Depth: The Trophy provides faster feedback by placing static tests at the bottom, allowing developers to receive instant feedback on syntax and type errors. Integration tests, though slower than unit tests, are much faster than E2E tests and strike a workable balance for CI/CD pipelines.

- Better ROI: Integration tests can pinpoint more critical, user-facing bugs than unit tests, providing a higher return on investment (ROI) than maintaining many isolated unit tests.

- Lower Maintenance Costs: With ever-changing UIs in modern applications, E2E tests become high-maintenance and brittle. The Trophy Model limits E2E tests to only the most critical, high-level user journeys. Integration tests depend less on internal implementation details than unit tests do, so they are less likely to break during refactoring. Overall, this tends to lower maintenance costs.

- Alignment with Modern Tooling: Most modern testing frameworks make integration testing faster and more reliable. Using tools like React Testing Library, developers can test how components interact in a simulated browser environment. The Trophy Model is thus more aligned with the modern tools.

Benefits of the Testing Trophy Model

- Improved Real-World Accuracy: By emphasizing integration tests, the Testing Trophy Model validates interactions between modules, more closely replicating actual user behavior than isolated unit tests.

- Optimal Feedback Speed: The model prioritizes faster integration tests over slower E2E tests, providing developers with quick feedback. Integration tests are also easier to maintain than E2E tests.

- Reduced Maintenance Costs: The brittle, slow E2E tests are minimized in this model and used only on critical user flows. Because integration tests focus on behavior rather than implementation details, they are less brittle, which keeps the overall cost of maintaining the suite down.

- Effective Resource Utilization: The Trophy Model ensures efforts are concentrated where they are most valuable, testing the interaction between components, rather than over-testing in isolation.

- Improved ROI: This model focuses on integration tests, which are less brittle than E2E tests and more realistic than unit tests.

- Better Confidence: The Trophy Model ensures that when individual parts change, the system as a whole still functions. Integration tests represent real user scenarios better than small unit tests.

- Suitable for Modern Apps: Ideal for modern web apps where component interaction is critical.

Criticisms of the Testing Trophy Model

- Performance and Maintenance Overhead: Because integration tests are slower than unit tests, relying on them heavily can result in slower test execution and higher maintenance costs compared to a unit-test-dominant approach.

- “Top-Heavy” Fragility: Teams might lean too far toward the upper layers, ending up with many large integration tests and complex E2E tests that run slowly and are prone to flakiness.

- Context Misapplication: If the team does not understand the context the model was designed for, it might be misused on projects where the traditional, unit-test-focused “test pyramid” is more efficient.

- Over-reliance on Mocking: In their effort to balance integration tests, teams might mock too many dependencies, leading to a false sense of security and testing components in isolation rather than in a realistic environment.

- Increased Complexity: Writing and debugging integration tests is more complex as they often involve API calls, databases, or browsers. It requires more effort and sophisticated tooling.

- Misunderstanding of Value: Teams may adopt the Trophy Model blindly, neglect crucial unit tests, or over-invest in tests that do not deliver high value.

How to Implement the Testing Trophy?

The Testing Trophy Model prioritizes integration tests, with a strong foundation in static analysis, fewer unit tests, and minimal end-to-end (E2E) tests. The model focuses on testing user-centric workflows to provide maximum confidence, speed, and maintainability.

Start by evaluating the current test distribution: measure the percentages of unit, integration, and E2E tests, and review how static analysis is currently used.
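Measuring the distribution is simple arithmetic. The sketch below assumes the test counts per layer are already known (how they are collected depends on the test runner and project layout); the `TestCounts` type, the `testDistribution` function, and the sample numbers are hypothetical.

```typescript
type TestCounts = { unit: number; integration: number; e2e: number };

// Converts raw test counts into whole-number percentages of the suite.
function testDistribution(counts: TestCounts): TestCounts {
  const total = counts.unit + counts.integration + counts.e2e;
  if (total === 0) {
    return { unit: 0, integration: 0, e2e: 0 }; // avoid dividing by zero
  }
  const pct = (n: number): number => Math.round((n / total) * 100);
  return {
    unit: pct(counts.unit),
    integration: pct(counts.integration),
    e2e: pct(counts.e2e),
  };
}

// Example: a unit-heavy suite of 1,000 tests.
const currentShape = testDistribution({ unit: 700, integration: 200, e2e: 100 });
// currentShape is { unit: 70, integration: 20, e2e: 10 }
```

A result like this one describes a pyramid-shaped suite, which suggests that moving toward the trophy would mean growing the integration layer rather than adding more unit tests.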

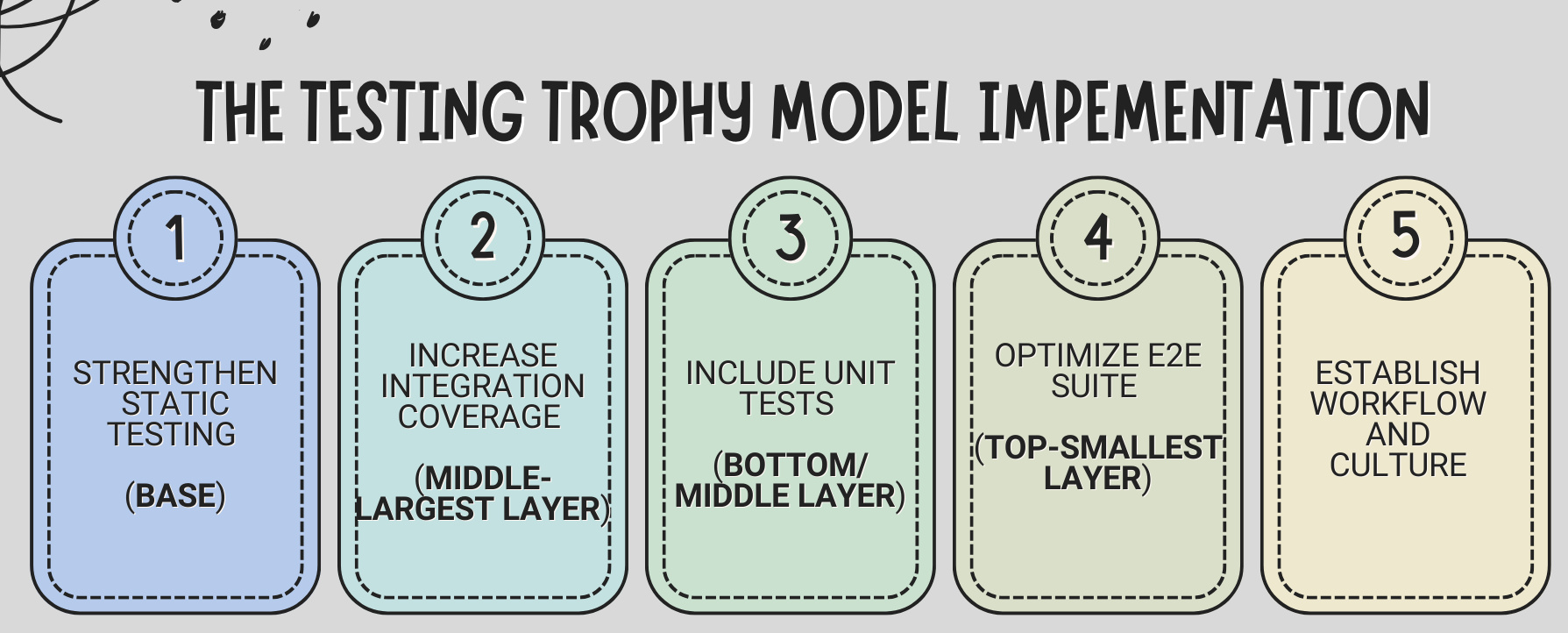

Implementation of the model involves the following steps:

Step 1: Strengthen Static Testing (Base)

- Type systems like TypeScript for type checking

- Linters (ESLint) to catch syntax errors and enforce linting rules.

- Security scanners

- Code formatters

The primary purpose of this step is to obtain fast, automated feedback before code execution that prevents simple errors.

Step 2: Increase Integration Coverage (Middle-Largest Layer)

- Tests covering real interactions to test how different components or modules work together.

- Test the business flows, database integrations, and API contracts.

- Use tools like React Testing Library to test user behavior (e.g., clicking, filling forms) rather than implementation details.

Increasing and strengthening integration coverage balances speed and confidence, and helps ensure that features work correctly together.

Step 3: Include Unit Tests (Bottom/Middle)

- Write unit tests for isolated, complex logic, such as utility functions. Pay attention to areas where edge cases are hard to cover via integration tests.

Granular logic can be quickly verified using unit tests.

Step 4: Optimize E2E Suite (Top-Smallest Layer)

- Critical user journeys

- High-risk flows

- Smoke tests

- Use modern testing tools for critical user journeys (e.g., login, checkout)

E2E validates the entire application flow from the user’s perspective with minimal mocking.

Step 5: Establish Workflow and Culture

- Integrate all the tests (steps 1-4) into your CI/CD pipeline.

- Shift focus from 100% code coverage to testing user scenarios, prioritizing tests that offer the highest ROI in terms of confidence.

Real-World Example

Imagine an e-commerce application with a React frontend application using TypeScript. The various components of the Testing Trophy Model are as follows:

| Static Tests | Unit Tests | Integration Tests | E2E Tests |

|---|---|---|---|

|

|

|

|

Under the Testing Trophy, most automated tests would exist at the integration layer.

When to Prefer the Testing Trophy Model?

The Testing Trophy Model is best suited to modern, complex, and interconnected applications, such as full-stack web applications, SPA frameworks, API-driven systems, and microservices. In such systems, user experience and component integration are more critical than isolated unit logic.

- Front-end and UI-Focused Projects: In applications with heavy user interaction (e.g., those built with React, Vue, or Angular), ensuring the UI works correctly is important.

- High Interdependency: Components are deeply interconnected, requiring tests that verify their interactions rather than just their isolated functionality.

- Faster Development Cycles: When teams need feedback that is both fast and trustworthy, integration tests can provide it.

- Focus on User Workflows: When realistic user scenarios need to be tested without the maintenance overhead of full end-to-end tests.

- Modern JavaScript/TypeScript Stack: When the stack supports tools like Testing Library, which exercise the application the way a user would.

Testing Trophy in CI/CD Pipelines

The Testing Trophy in CI/CD prioritizes integration tests over unit tests to achieve a balance of speed, confidence, and maintainability. A typical pipeline is ordered as follows:

- Static checks are run first (may take seconds).

- Unit tests are executed next (fast).

- Integration tests are run in parallel.

- E2E tests are scheduled in staging or nightly builds.

This ordering provides:

- Quick feedback for developers

- High confidence before deployment, with integration tests that ensure real-world scenarios work

- Fewer brittle E2E tests means reduced maintenance
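The stage ordering above can be sketched as a small fail-fast runner. This is a simplified model, not a real CI configuration: the `Stage` type and `runPipeline` function are hypothetical, each stage is reduced to a function that reports pass or fail, and the stages run one after another rather than in parallel.

```typescript
// Hypothetical stage model for illustration.
type Stage = {
  name: string;
  blocking: boolean; // true: runs on every commit and gates the merge
  run: () => boolean; // returns true when the stage passes
};

type PipelineResult = { executed: string[]; deferred: string[]; passed: boolean };

// Runs blocking stages in order and stops at the first failure, so the
// cheapest checks fail first. Non-blocking stages (for example nightly E2E
// runs) are only recorded as deferred.
function runPipeline(stages: Stage[]): PipelineResult {
  const executed: string[] = [];
  const deferred: string[] = [];
  let passed = true;
  for (const stage of stages) {
    if (!stage.blocking) {
      deferred.push(stage.name);
      continue;
    }
    if (!passed) continue; // skip remaining blocking stages after a failure
    executed.push(stage.name);
    passed = stage.run();
  }
  return { executed, deferred, passed };
}

const pipelineResult = runPipeline([
  { name: "static", blocking: true, run: () => true },
  { name: "unit", blocking: true, run: () => false }, // simulated failure
  { name: "integration", blocking: true, run: () => true },
  { name: "e2e", blocking: false, run: () => true },
]);
// pipelineResult.executed is ["static", "unit"]: integration is skipped.
// pipelineResult.deferred is ["e2e"] and pipelineResult.passed is false.
```

Putting the fastest checks first means a type error or a failing unit test is reported without waiting for the slower integration stage.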

Summary

The Testing Trophy Model redefines the test distribution for modern software systems. It promotes a balanced approach with integration tests as the core of the testing strategy and static tests as the foundation.

The model combines strong static analysis, targeted unit tests, extensive integration tests, and strategic end-to-end coverage, ensuring teams achieve high confidence at manageable maintenance costs.

The key takeaway from this model is the philosophy:

Test what matters most, at the level that provides the best confidence-to-cost ratio.

Frequently Asked Questions (FAQs)

- What is the Testing Trophy Model in software testing?

The Testing Trophy Model is a modern testing strategy that emphasizes integration tests as the most valuable type of automated testing. It has static tests as its base layer, a balanced number of unit tests, a large portion of integration tests, and a smaller set of end-to-end (E2E) tests at the top.

- Why does the Testing Trophy emphasize integration tests?

Integration tests verify how different components or modules of the system work together. In modern applications, especially web apps and APIs, many issues occur at integration points. Integration tests offer a better balance between speed, reliability, and real-world confidence.

- Are unit tests still important in the Testing Trophy Model?

Yes, unit tests are still important, especially for complex business logic and pure functions. However, this model discourages over-reliance on unit tests that focus too much on implementation details.

- When should you use end-to-end (E2E) tests?

E2E tests should be used for critical user journeys, smoke tests, and high-risk workflows. Since they are slower and more brittle, the Testing Trophy recommends keeping them limited but strategic.