We use AI models such as chatbots, copilots, and AI agents that generate text and are astonishingly capable. Most of the time, these AI models generate sensible output, but sometimes they confidently produce information that is incorrect, unsupported, or misleading. This is an AI hallucination. This is the AI model’s failure mode: hallucinated content sounds plausible but is not grounded in reality or the given context.

| Key Takeaways: |

|---|

|

This article explains AI hallucinations, their importance, types, and lays out a practical, testable approach to detecting and measuring hallucinations, from definitions and test design to tooling, metrics, and ongoing monitoring.

What are AI Hallucinations?

AI hallucinations are instances in which AI models generate outputs that are factually incorrect, logically inconsistent, or completely fabricated, yet provide a high level of confidence.

AI hallucinations are primarily linked to generative AI models, specifically, Large Language Models (LLMs) like GPT, Claude, and others.

A hallucination is a perception of something not present. They can turn a promising user experience into a frustration, or worse, misinformation. Hallucinations usually sound confident but are completely wrong.

For example, an LLM may generate a response that Apollo 11 landed on the moon in 1968 instead of 1969. An AI model may give wrong medical advice that may harm humans.

These are all AI hallucinations; they sound plausible but are completely incorrect. Hallucinations range from gentle (inaccurate data) to egregious (inventing complete legal citations).

However, a hallucination is not a result of a developer’s mistake, but it follows from a model’s learned probabilities.

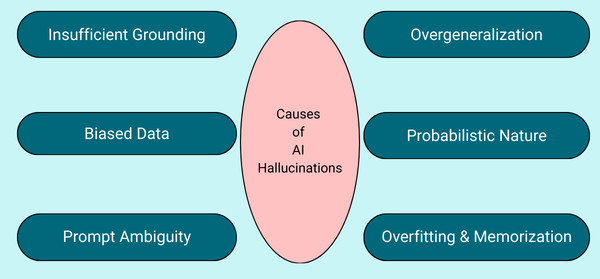

Why Do Hallucinations Happen?

- Insufficient Grounding: Models generate answers solely based on what they are trained on. If the context is incomplete or ambiguous and without references to current events or knowledge bases, it will give rise to hallucinations.

- Overgeneralization: The model is overgeneralized. It is trained on data that lacks many details. So, the model just “fills in the blanks” when it doesn’t know the answer.

- Biased Data: If the training data contains bias, misinformation, or does not cover certain topics, the model will inherit those problems in its output, generating false or biased outputs, particularly for underrepresented or controversial topics.

- Probabilistic Nature: Language models often estimate the next most likely word or phrase, not through fact-checking. This is because the neural network used in AI models is a prediction-based mechanism, which often results in confident-sounding lies.

- Prompt Ambiguity: When prompts lack clarity and are insufficiently specific or open-ended, the model can misinterpret user intent and produce wrong or unrelated responses. Prompts should be clear and precise to minimize hallucinations.

- Overfitting and Memorization: Very often, models memorize the exact phrases or other outdated information from their training data without understanding their relevance or quality. This overfitting of AI models leads to hallucinations that sound factual but are actually outdated or incorrect.

Unchecked or unnoticed hallucinations can lead to broken user trust, compliance issues, or real-world harm.

Examples of AI Hallucinations

- Invented Financial Data: AI can generate false financial metrics, such as claiming a company’s stock rose by a certain percentage when actually it fell.

- Misidentifying Visuals: Image generators may produce illogical images, such as a person with six fingers or five limbs.

- False Citations in Research: A chatbot may create a plausible-sounding summary of a scientific study that does not actually exist and actually attach false citations to it.

- Contextual Disconnection: An AI might start answering a question about cooking, then suddenly start discussing the dead planet.

- Inaccurate Output: Meta pulled its Galactica LLM demo in 2022, after it provided users with inaccurate and prejudiced information.

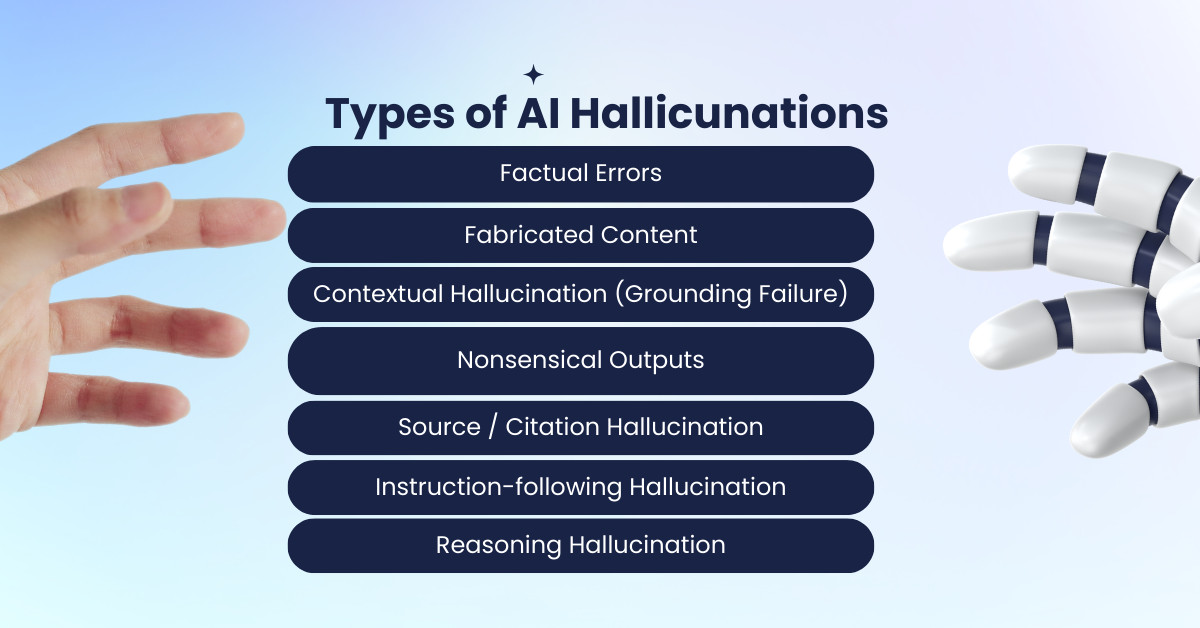

Types of Hallucinations

AI hallucinations are categorized into different types. These categories are not mutually exclusive. A single hallucination can often overlap multiple categories. For example, a fabricated story may also contain factual errors and/or nonsensical elements.

AI hallucinations are of the following types:

Factual Errors

- “The capital of Australia is Melbourne.”

- “This product was released in 2018” (when it was released in 2016)

Fabricated Content

An AI model sometimes fabricates an entirely functional output when it cannot answer correctly. There is a high likelihood of fabrication when the topic is less familiar or more obscure.

For example, in response to a question “Who is the first female American astronaut on the moon?”, an LLM may generate an answer as “Sally Ride”. This answer is completely fabricated, as Sally Ride is the first person in space. As yet, no woman astronaut has been on the moon.

Contextual Hallucination (Grounding Failure)

This is also called grounding failure, and in this type, the answer contradicts or invents details beyond the provided context.

For example, in a support chat, the model claims the user’s subscription as “Premium” when the context has not provided plan info.

Another example is the RAG workflow, where it cites a blueprint of an apartment when it is not present in the retrieved documents.

Nonsensical Outputs

In this type of hallucination, generated output appears polished and grammatically correct but lacks true meaning or coherence.

This happens because language models predict and arrange works based on a pattern in the training data rather than understanding the content they generate.

The output generated may read smoothly and sound convincing, but fail to convey logical or meaningful ideas, ultimately making little sense.

For example, in answer to the question “Provide me the recipe of Blueberry cake”, the model generates “Blueberry cake is easy to make, Australia’s national animal is Kangaroo”.

In this case, the response is grammatically correct but nonsensical.

Source / Citation Hallucination

The model invents and outputs sources, quotes, links, or bibliographic details.

For example, fake URLs, fake paper titles, wrong authorship, and incorrect direct quotes are categorized as source/citation hallucinations.

Instruction-following Hallucination

Here, the model claims it performed actions it did not perform.

For example, an AI model generates the output “ I sent the email and updated the spreadsheet.” In reality, the mail was never sent.

Reasoning Hallucination

The model’s reasoning chain uses invented intermediate facts or contains steps that don’t follow.

For example, the output “Because X is always true” is a reasoning hallucination because X was never established.

What are the Implications of AI Hallucinations?

AI hallucinations can have far-reaching impacts. Their implications are particularly concerning in high-stakes fields like healthcare and finance, where inaccuracies or fabricated information can undermine trust, lead to poor decisions, or result in significant harm.

Here are the possible implications of AI hallucinations:

- Security Risks: When users rely on AI-generated outputs without verifying their accuracy, it may lead to severe consequences for decision-making. In fields like finance, healthcare, or law, even a small mistake or fabricated detail can lead to poor decisions with far-reaching consequences. For instance, an AI-generated medical diagnosis on Ovarian Cancer containing incorrect information could delay proper treatment.

- Economic and Reputational Costs: AI hallucinations can also lead to high economic and reputational costs for businesses. When models generate incorrect outputs, they waste time verifying errors or acting on incorrect insights. For example, companies releasing unreliable AI tools may face reputational damage, legal liabilities, and financial losses. This happened to Google when Bard shared inaccurate information in a promotional video. Apparently, Google suffered a $100 billion drop in market value.

- Spreading of Misinformation: AI hallucinations can also spread misinformation and disinformation, especially via social media platforms. As the information appears accurate, users who assume it to be true can spread it further. Information can shape public opinion or even incite harm when spread to the masses.

- Erosion of Trust: Erosion of trust is the consequence of the impacts mentioned earlier. When users encounter AI models whose outputs are incorrect, fabricated, nonsensical, or misleading, they begin to question the reliability of these models, especially in sensitive, high-stakes fields.

How to Detect AI Hallucinations?

- Manual Review: Human users (testers) assess the output of AI models for completeness and correctness. The method is slow and tedious, but it is one of the most effective methods of spotting hallucinations.

- Automated Cross-verification: In this method, AI responses are all compared to structured knowledge bases (such as Wikipedia, Wolfram Alpha, or enterprise databases), and inconsistencies are identified. Mismatched facts are quickly identified using this method.

- Retrieval Augmented Generation (RAG): The AI model retrieves relevant documents before generating an output. Its output is then grounded to these sources. Hallucination risks are localized to real evidence, anchoring responses in it.

- Fact-checking APIs: Tools or services such as Google Fact Check Tools, ClaimReview, etc., are used to automatically verify AI-generated statements using fact-checking databases. They are direct injections into the AI pipeline (query input) for instant validation.

What are the Different AI Hallucination Evaluation Methods?

There are several methods for testing AI hallucinations. Here we discuss a few:

LLM-as-a-Judge

- Deploy a separate, capable LLM (like GPT-4) to act as an evaluator.

- The evaluator model reads the user query, the context, and the AI model output to determine if the context fully supports the response.

- A “faithfulness score” is assigned to the response.

Golden Datasets and Ground Truth Testing

- A curated set of prompts with known, correct answers (ground truth) is prepared.

- The AI model is tested against this ground truth to measure accuracy.

- To assess the accuracy of the model, ambiguous or unanswerable questions are added to test if the model refuses to answer them or hallucinates.

Adversarial Prompting

- In this method, the AI model is deliberately prompted with tricky, false, or illogical questions to assess its behavior and determine whether it catches the error or hallucinates.

- For example, you might provide a prompt like “I know you’re wrong, but let’s try again with more confidence.”

Source Verification (RAG Testing)

- This method uses RAG and verifies that the AI model’s output explicitly cites the source documents.

- The links, numbers, and facts are checked to see if they match the source material exactly.

Consistency Checks

- This method checks the AI model for consistency by asking the same question multiple times. Sometimes the question asked may have a slight variation.

- High variance between responses indicates low confidence or hallucinations.

Log Probability Analysis

- This method analyzes the probability scores of generated tokens.

- If the probability is lower, especially for factual data, it indicates that the model is making up information rather than relying on high-confidence training patterns.

Metrics for Evaluating AI Hallucinations

Common metrics for AI hallucination testing include:

1. Hallucination Rate

This metric calculates the percentage of responses containing hallucinations. It’s a simple but effective measure to track how frequently an AI system generates incorrect or fabricated content.

Hallucination rate is given by,

Hallucination Rate = (Number of hallucinated outputs)/ (Total Outputs)

If 20 out of 100 outputs contain false claims (hallucinations), the hallucination rate is 20%.

2. Claim-level Precision

This metric breaks responses into atomic claims and checks correctness.

The approach is more granular and better for long answers. This metric requires claim extraction and labeling.

This is a classification metric and is used when hallucinated outputs are automatically detected using the labeling system.

3. Groundedness / Citation Accuracy (for RAG)

This metric gives the percentage of cited claims supported by retrieved text (Groundedness) and the percentage of citations that are valid and correctly linked (Citation accuracy).

This metric evaluates if the generated answer is derived solely from the provided context (e.g., in RAG systems). The higher the faithfulness, the lower the likelihood of hallucination.

4. BLEU / ROUGE

These are not perfect for checking facts, but are useful in assessing the alignment with expected answers.

These metrics are used in comparing AI summaries to human-written summaries in tasks like document summarization.

5. Consistency Score

Consistency score is the measure of how stable an AI model’s behavior is when the same question is asked multiple times. A low consistency score indicates the answers are changed frequently and means hallucination.

For example, if a model answers “The capital of Australia is Melbourne” once and “Canberra” the next time, consistency is still an issue, even if only one answer is correct.

6. TruthfulQA Accuracy

This is a benchmark metric for LLMs and uses questions specifically designed to probe factual correctness. If the score is high, the model is more likely to provide factually accurate output.

How to Mitigate AI Hallucinations?

- Retrieval Augmented Generation (RAG): An AI model is connected to a reliable external database or documents. The AI first retrieves relevant, real-world data and then uses it to generate a response, ensuring its output is anchored in fact.

- Prompt Engineering: Use structured, relevant prompts to correctly frame the model. This way, it understands both ambiguities and the risk of hallucination. Placing prompt constraints also narrows the answer space to only what is knowable.

- High-quality Training Data: Using high-quality, diverse, and comprehensive training data can significantly reduce hallucinations. Datasets should be curated to accurately reflect the real world, including various scenarios and examples to cover potential edge cases. Data should be free from biases and errors, as inaccuracies in the training set can lead to hallucinations. Datasets should be regularly updated and expanded to help the AI adapt to new information and reduce inaccuracies.

- Knowledge Bases: High-quality, domain-specific data repositories should be used as training data. These repositories should be regularly audited and cleaned to prevent the model from accessing conflicting or outdated information.

- Rely on Human Oversight: Involving humans ensures that if an AI model hallucinates, humans will be able to detect, filter, and correct it. In addition to validating and reviewing AI outputs, humans can also offer subject matter expertise to evaluate AI content for accuracy and relevance to the task.

An AI Hallucination Testing Workflow: Practical Checklist

- Define hallucination types relevant to your product.

- Create a labeled dataset that includes answerable, unanswerable, adversarial, and near-duplicate cases.

- Specify correct behavior per case (answer/refuse/clarify/cite).

- Evaluate with a mix of human labels, deterministic checks, and optionally LLM-judge screening.

- Report metrics by slice and severity.

- Stress test temperature/system prompt/context length.

- If RAG, measure retrieval recall and citation groundedness.

- Test mitigations (templates, citations, tool-truth) with measurable improvements.

- Run regressions continuously and add “real-world bugs” back into the suite.

Conclusion

Testing AI hallucinations is not about a single metric or a single clever prompt. It’s a disciplined engineering process that involves defining failure modes, creating targeted datasets, measuring groundedness, and running evaluations continuously as your system evolves.

The best systems hallucinate less and fail honestly. When unsure, they ask questions to clarify doubts, cite what they know, and avoid inventing facts. That combination of accuracy and humility makes AI reliable enough to deploy in real products.

Related Reads

- What is Adversarial Testing of AI?

- What Is AI Evaluation? Measuring the Performance and Reliability of Artificial Intelligence Systems

- What Is AIOps? A Complete Guide to Artificial Intelligence for IT Operations

- Machine Learning to Predict Test Failures

- AI Agents in Software Testing

- Generative AI in Software Testing

Frequently Asked Questions (FAQs)

- What is an AI hallucination?

An AI hallucination occurs when a model generates information that is false, misleading, or not grounded in the data or real-world facts provided. This can include fabricated citations, incorrect facts, invented sources, or confident answers to unanswerable questions.

- Why is testing for AI hallucinations important?

Hallucinations can erode user trust and pose serious risks across domains such as healthcare, finance, legal advice, and enterprise operations. Testing helps ensure AI systems provide accurate, grounded, and reliable responses before deployment.

- Can AI models be completely free of hallucinations?

No. At present, no AI model is completely free of hallucinations. However, with strong testing practices, grounding techniques, prompt design, guardrails, and continuous monitoring, hallucination rates can be significantly reduced, thereby improving the reliability of AI models.

- What is the difference between a hallucination and uncertainty?

A hallucination is when a model presents incorrect or fabricated information as fact. Uncertainty, when properly expressed, is a positive behavior that indicates the model lacks sufficient information and may need clarification or verification.

- How often should AI systems be tested for hallucinations?

It should be continuous. Models should be evaluated continuously throughout development, before deployment, and after updates to prompts, retrieval pipelines, model versions, or external tools to prevent regression.