It starts with a straightforward question: where should we put most of our testing effort? Over time, several models have been proposed for how testing should be distributed across the layers of an application.

Among these models, the Ice Cream Cone Model stands apart, not because it is meant to be an ideal, but because it names a common anti-pattern in contemporary testing practice. It describes a testing structure that depends largely on UI or end-to-end tests, with very few unit tests at the foundation.

This structure may look attractive because it exercises the application the way users do, but it comes with instability, maintenance overhead, slow feedback loops, and brittle test suites.


Distribution Models in Testing

Modern software systems are built in layers: business logic, APIs, databases, user interfaces, and external integrations. Because each layer behaves differently and carries a different degree of risk and complexity, testing effort needs to be distributed carefully across them.

Distribution models visually represent how testing effort is allocated across these layers. They help teams consider not only the volume of tests but also their cost, speed of feedback, maintenance burden, and overall risk coverage. One of the most popular models is the Test Pyramid, which relies on a broad foundation of unit tests and fewer high-level UI tests.

The Ice Cream Cone Model reverses this structure: a large number of UI tests sits on a small base of unit tests, which often results in slower feedback and more maintenance overhead.
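To make the idea of a distribution concrete, here is a minimal sketch that labels a suite by the relative size of its layers. The `SuiteCounts` type, the `classify_distribution` function, and the strict "each layer larger than the next" rule are illustrative assumptions, not a standard definition of either model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteCounts:
    """Hypothetical tally of tests per layer."""
    unit: int
    integration: int
    ui: int


def classify_distribution(counts: SuiteCounts) -> str:
    """Return a rough label for the shape of a test suite."""
    # Pyramid: each layer is smaller than the one beneath it.
    if counts.unit > counts.integration > counts.ui:
        return "pyramid"
    # Ice cream cone: the ordering is reversed, with UI tests dominating.
    if counts.ui > counts.integration > counts.unit:
        return "ice cream cone"
    # Anything else does not match either shape cleanly.
    return "mixed"


print(classify_distribution(SuiteCounts(unit=900, integration=80, ui=20)))
print(classify_distribution(SuiteCounts(unit=20, integration=80, ui=900)))
```

Raw counts are only a proxy. A real assessment would also weigh execution time, flakiness, and how much risk each layer actually covers.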

What is the Testing Ice Cream Cone Model?

The Ice Cream Cone Model is an upside-down testing pyramid: very few unit tests form a narrow base, some integration tests sit above them, and the bulk of the suite consists of UI or end-to-end tests, many of them manual, like a large scoop resting on a thin cone. Engineering teams rarely choose this model deliberately.


In practice, the structure evolves slowly out of organizational habits, legacy constraints, tooling limitations, and gaps in testing expertise. It is characterized by dependence on full-system testing, limited unit coverage, delayed feedback cycles, and fragile test behavior. Because defects are harder to isolate and maintenance costs grow over time, the Ice Cream Cone Model is considered an anti-pattern in modern software testing.

Why Do Teams End Up with the Ice Cream Cone Model?

- Rapid Product Development: When teams are under pressure to release features quickly, writing UI tests may feel like the fastest way to validate behavior. Instead of isolating business logic and building proper unit tests, teams write automation scripts that simulate user flows. This produces quick validation but builds technical debt.

- Legacy Systems Without Testability: In monolithic or tightly coupled systems, writing unit tests can be difficult. If code is not modular, dependency injection is absent, or business logic is embedded in UI layers, writing proper unit tests becomes painful. Teams default to higher-level testing because it is easier to test the system as a whole rather than refactor the architecture.

- Skill Gaps in Unit Testing: Some teams have strong QA automation experience but limited exposure to unit testing best practices. As a result, test coverage accumulates in UI automation instead of developer-level tests.

- Organizational Silos: When development and QA teams are separated, developers may focus on delivering features while QA builds end-to-end automation suites. If developers do not take ownership of unit testing, the foundation remains weak.

- Overconfidence in End-to-End Testing: There is often a misconception that UI tests provide “real” validation because they simulate actual user behavior. While valuable, over-reliance on them creates a false sense of security.


Ice Cream Cone Testing Structure

The Ice Cream Cone Model is most visible when we look at how testing effort is organized at each layer. Its imbalance shows in the narrow foundation, the underdeveloped middle, and the overweighted upper layer.

Narrow Base: Minimal Unit Tests

Unit tests verify small pieces of logic in isolation from one another and from external dependencies such as a database, so that the business rules they encode behave correctly once the system is assembled. In the Ice Cream Cone Model, however, unit tests are sparse. Critical logic is rarely exercised directly and is therefore insufficiently verified at the code level.

Consequently, edge cases often remain undiscovered, and regression bugs recur. Because the foundation is so weak, defects introduced in the code are generally discovered much later, during UI or system-level testing, where they cost more to diagnose and repair.
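As an illustration of the kind of test that is missing at the base, the sketch below checks a business rule directly, including an edge case and an invalid input. The `apply_discount` function and its rule (5% off per loyalty year, capped at 20%) are hypothetical and stand in for whatever logic an application actually contains.

```python
import unittest


def apply_discount(total_cents: int, loyalty_years: int) -> int:
    """Hypothetical rule: 5% off per loyalty year, capped at 20%."""
    if total_cents < 0 or loyalty_years < 0:
        raise ValueError("total and loyalty years must be non-negative")
    percent = min(loyalty_years * 5, 20)
    return total_cents - (total_cents * percent) // 100


class ApplyDiscountTest(unittest.TestCase):
    def test_no_loyalty_means_no_discount(self):
        self.assertEqual(apply_discount(10_000, 0), 10_000)

    def test_discount_is_capped_at_twenty_percent(self):
        # Ten years would be 50% without the cap; the cap keeps it at 20%.
        self.assertEqual(apply_discount(10_000, 10), 8_000)

    def test_negative_total_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(-1, 1)
```

Tests like these need no browser, network, or database, so they can run on every change (for example with `python -m unittest`). A failure points at a single function instead of a whole user flow.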


Thin Middle: Limited Integration Tests

Integration tests verify how components interact, for example, service-to-service communication and API contracts. In this model the layer exists but is shallow, with inconsistent API validation and little use of effective mocking strategies.

As a result, data integrity issues and failing interactions are typically discovered late in the testing process. Without strong integration coverage, defects that arise from collaboration between components slip through until a higher-level test exposes them.
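A narrow integration test can exercise the collaboration between two components without starting the whole system. The sketch below wires a service to a repository backed by an in-memory SQLite database. `OrderService`, `OrderRepository`, and the `orders` table are hypothetical names chosen for illustration.

```python
import sqlite3


class OrderRepository:
    """Hypothetical persistence component backed by SQLite."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS orders ("
            "id INTEGER PRIMARY KEY, total_cents INTEGER NOT NULL)"
        )

    def add(self, total_cents: int) -> int:
        cursor = self._conn.execute(
            "INSERT INTO orders (total_cents) VALUES (?)", (total_cents,)
        )
        self._conn.commit()
        return cursor.lastrowid

    def total_revenue(self) -> int:
        row = self._conn.execute(
            "SELECT COALESCE(SUM(total_cents), 0) FROM orders"
        ).fetchone()
        return row[0]


class OrderService:
    """Hypothetical business component that depends on the repository."""

    def __init__(self, repository: OrderRepository) -> None:
        self._repository = repository

    def place_order(self, total_cents: int) -> int:
        if total_cents <= 0:
            raise ValueError("order total must be positive")
        return self._repository.add(total_cents)


def test_placed_orders_are_persisted_and_summed() -> None:
    # Real SQL runs against an in-memory database, so no external setup is needed.
    conn = sqlite3.connect(":memory:")
    try:
        repository = OrderRepository(conn)
        service = OrderService(repository)
        service.place_order(1_500)
        service.place_order(2_500)
        assert repository.total_revenue() == 4_000
    finally:
        conn.close()
```

The test covers the seam between service and storage (schema, query, and data flow), which is where the data integrity problems described above tend to hide.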


Heavy Top: Large Manual UI Test Suite

In the Ice Cream Cone Model, most of the validation effort happens at the UI level, and primarily through manual testing rather than automation. Teams depend heavily on manual regression cycles, end-to-end user flows, and full system validation executed by QA engineers before every release. This approach has several drawbacks:

- Time-consuming: Full regression cycles take days or even weeks.

- Resource-intensive: Requires large QA teams and repeated effort for every release.

- Difficult to scale: As the product grows, manual coverage becomes harder to maintain.

- Prone to human error: Repetition can lead to missed edge cases or inconsistent execution.

- Slow feedback loop: Developers receive defect feedback late in the cycle, increasing rework cost.

Because most validation occurs at the top layer, lower-level safeguards like unit and component tests remain underdeveloped. This makes the system fragile, slows down release velocity, and increases long-term maintenance risk.


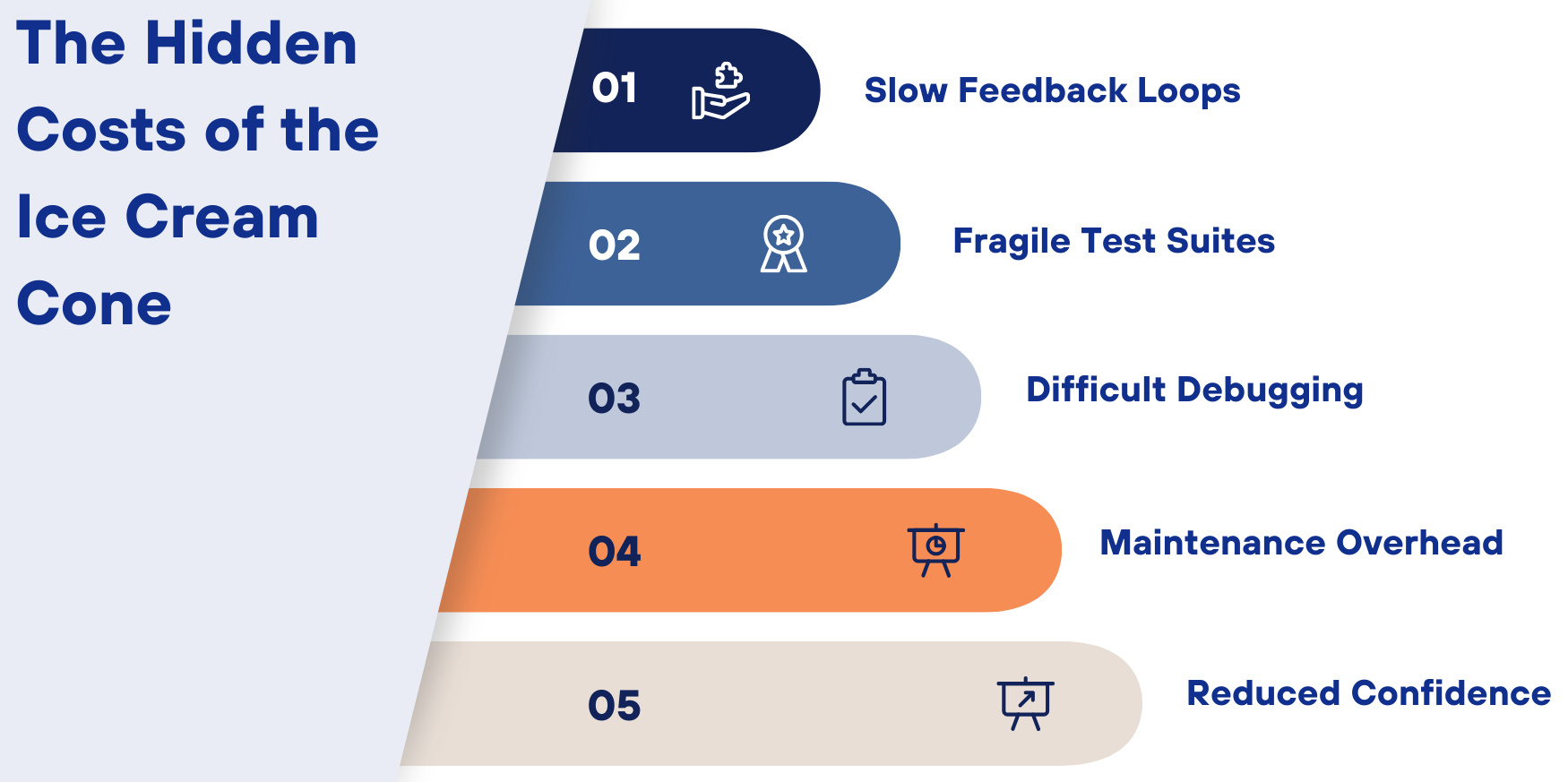

The Hidden Costs of the Ice Cream Cone

The Ice Cream Cone Model poses structural and operational risks that are cumulative over time. It is an imbalanced approach that slows the pace of development, induces instability, and lowers the overall confidence in the test suite.

- Slow Feedback Loops: Unit tests run quickly and identify defects at the code level almost immediately. UI tests are slower because they rely on rendering layers, network calls, databases, browser automation, and environment setup. When most validation occurs at the UI layer, feedback cycles become significantly longer.

- Fragile Test Suites: UI tests are sensitive to layout changes, timing changes, CSS updates, slight refactoring, and asynchronous loading behavior. Even cosmetic changes that leave behavior untouched can cause multiple unrelated test failures. Such fragility generates noise in the test suite and weakens its reliability.

- Difficult Debugging: When a UI test fails, finding the root cause is complicated and time-consuming. Business logic errors, improper data configuration, unstable API responses, timing inconsistencies, or infrastructure issues are all possible causes. Without strong unit test coverage to isolate logic, failures must be traced through multiple layers of the system.

- Maintenance Overhead: Large UI test suites require ongoing maintenance as interfaces change. Even minor UI changes can force extensive updates across many test cases. As the system expands, keeping the automation alive can take more effort than building new features.

- Reduced Confidence: The large number of UI tests in the Ice Cream Cone Model does not necessarily translate into greater confidence to release. Frequent and unpredictable failures cause teams to lose faith in the automation suite. When flaky tests are ignored and false positives persist, trust in the testing process wanes.
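The feedback-loop cost can be sketched with simple arithmetic. The per-test durations and test counts below are hypothetical round numbers chosen only to show the shape of the effect. They are not measurements from any real suite.

```python
from typing import Mapping


def suite_runtime_seconds(
    counts: Mapping[str, int], seconds_per_test: Mapping[str, float]
) -> float:
    """Total sequential runtime: sum of (tests in layer) x (seconds per test)."""
    return sum(counts[layer] * seconds_per_test[layer] for layer in counts)


# Assumed average durations per test, in seconds (illustrative only).
SECONDS_PER_TEST = {"unit": 0.01, "integration": 1.0, "ui": 30.0}

# Two suites with the same total of 1,000 tests but opposite shapes.
PYRAMID = {"unit": 900, "integration": 80, "ui": 20}
CONE = {"unit": 20, "integration": 80, "ui": 900}

for name, counts in (("pyramid", PYRAMID), ("ice cream cone", CONE)):
    minutes = suite_runtime_seconds(counts, SECONDS_PER_TEST) / 60
    print(f"{name}: about {minutes:.0f} minutes when run sequentially")
```

Under these assumptions the pyramid-shaped suite takes about 689 seconds (roughly 11 minutes), while the cone-shaped suite takes about 27,080 seconds (roughly 7.5 hours). Parallel execution would shrink both figures, but the gap between them remains.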


Issues in Architecture

The Ice Cream Cone Model is not just a testing bias; it is often a sign of deeper architectural weaknesses. It frequently reflects design choices that make focused lower-level testing difficult or impossible.

- Dependency and Coupling: When system components are closely linked, isolating individual units for testing becomes difficult. Teams then gravitate towards system-level or UI tests because they look simpler to write. However, those tests are far more expensive and time-consuming to run and maintain.

- Separation of Logic: If business logic lives in UI controllers or the frontend layer, isolating validation logic becomes hard. This forces teams to validate core functionality indirectly through end-to-end flows rather than through focused, efficient unit tests.

- Lack of Dependency Injection: Without proper abstraction layers and dependency injection, it is hard to mock external services and dependencies. As a result, developers fall back on full-stack tests, which reinforces the Ice Cream Cone anti-pattern and exposes additional structural limitations.
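The points above can be sketched in a few lines: when business logic lives outside the UI and receives its dependencies through the constructor, a test can substitute a fake for the external service. `CheckoutService`, `PaymentGateway`, and `FakeGateway` are hypothetical names used only for illustration.

```python
from typing import Protocol


class PaymentGateway(Protocol):
    """Abstraction over an external payment provider (hypothetical)."""

    def charge(self, amount_cents: int) -> bool: ...


class CheckoutService:
    """Business logic kept out of the UI layer; the gateway is injected."""

    def __init__(self, gateway: PaymentGateway) -> None:
        self._gateway = gateway

    def checkout(self, amount_cents: int) -> str:
        if amount_cents <= 0:
            return "rejected"
        return "paid" if self._gateway.charge(amount_cents) else "declined"


class FakeGateway:
    """Test double that records charges instead of calling a real provider."""

    def __init__(self, succeed: bool) -> None:
        self._succeed = succeed
        self.charged = []  # amounts passed to charge(), in call order

    def charge(self, amount_cents: int) -> bool:
        self.charged.append(amount_cents)
        return self._succeed


def test_successful_charge_marks_order_paid() -> None:
    gateway = FakeGateway(succeed=True)
    assert CheckoutService(gateway).checkout(1_200) == "paid"
    assert gateway.charged == [1_200]


def test_invalid_amount_never_reaches_gateway() -> None:
    gateway = FakeGateway(succeed=True)
    assert CheckoutService(gateway).checkout(0) == "rejected"
    assert gateway.charged == []
```

Without the injected `gateway` parameter, the only way to exercise `checkout` would be to drive the real payment provider, which pushes the test up into the full-stack layer.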


Ice Cream Cone Model vs. Testing Pyramid

The Ice Cream Cone Model is considered an anti-pattern because it inverts the balanced principles of the Testing Pyramid. The direct comparison below shows how each model approaches test distribution, cost, speed, and stability. The differences make clear why one promotes long-term efficiency while the other creates risk.

| Testing Pyramid | Ice Cream Cone Model |
|---|---|
| Large number of unit tests, fewer integration tests, minimal UI tests | Few unit tests, some integration tests, and a large number of UI tests |
| Fast feedback due to quick unit test execution | Slow feedback because most tests run at the UI level |
| Easy to pinpoint issues at the code level | Difficult to isolate root causes due to full-stack failures |
| Lower maintenance due to stable, isolated tests | High maintenance because UI tests frequently break |
| Highly stable and deterministic | Flaky and environment-dependent |
| Short execution cycles | Time-consuming test suites |
| Scales efficiently as the codebase grows | Becomes fragile and expensive over time |
| Issues caught early in development | Defects are found late in the testing cycle |
| High confidence from layered validation | Reduced confidence due to unpredictable failures |
| Clear validation at each layer | Blurred boundaries with over-reliance on end-to-end tests |

Conclusion

The Ice Cream Cone Model is an anti-pattern in software testing. In this model, most tests sit at the UI or end-to-end level, with very few unit and integration tests beneath them. This may sound practical, but the structure causes slow feedback, brittle automation, and high maintenance. Gradually, the team loses confidence in its releases. To deliver continuous quality and scalability, teams should rebalance their testing strategy towards foundational unit coverage and well-layered validation.