You can say Artificial Intelligence (AI) is doing pretty well these days. It has not only evolved tremendously, but it has also become deeply embedded in modern society, influencing decisions in healthcare, finance, hiring, education, and even criminal justice. However, while AI’s influence is steadily increasing, there are still challenges it faces, among which a big, persistent thorn is AI bias.


This article explores what AI bias is, its causes and types, real-world implications, and detailed methods for testing and mitigating it.

What is AI Bias?

AI bias, also known as algorithmic or machine learning bias, refers to systematic, unfair discrimination in an AI system’s outputs.

It occurs when human biases distort the original training data or the AI algorithm, which leads to skewed outputs and potentially harmful outcomes.

A bias is typically not intentional but emerges from the data, design, or assumptions used during the development of the AI system. Since AI models use historical data to learn patterns, existing societal biases present in that historical data are often inherited.

For example:

- A hiring algorithm trained on past company data is likely to favor male candidates for a particular position if the historical data shows that more men were hired.

- A facial recognition system trained mostly on images of lighter-skinned people may perform poorly on darker skin tones.

In essence, if the input data or design is biased, the output of the AI model will be biased too.

Unaddressed AI bias reduces an AI model’s accuracy and its potential. It can adversely impact an organization’s success and hinder people’s ability to participate in the economy and society.

AI bias can also erode trust among people of color, women, people with disabilities, and other marginalized groups.

According to The Wall Street Journal, “As use of AI becomes more widespread, businesses are still struggling to address pervasive bias.”

Why AI Bias Matters

AI bias, if not detected and addressed in time, amplifies harmful stereotypes, perpetuates discrimination, and reinforces existing societal inequalities in areas like hiring, lending, and healthcare. Biased systems generate unfair, inaccurate, and unsafe outcomes, creating legal risks for businesses and social harm for marginalized groups.

- Perpetuate Discrimination: AI systems that are trained on biased data can discriminate against individuals based on race, gender, or socioeconomic status. For example, hiring systems may penalize women, or AI may provide lower credit scores to minorities.

- Reinforce Stereotypes: Biased models can solidify prejudices and inequalities, such as associating specific professions with only one gender or race.

- Put the Business at Risk: Organizations that deploy flawed, biased AI systems face reputational damage, loss of trust, and potential legal or ethical consequences.

- Create Systemic Inequity: Unfair historical data on which the AI model is trained can dictate future opportunities, limiting inclusion and fairness in society, leading to inequality.

- Drive Unsafe Healthcare Decisions: In medicine and healthcare systems, biased diagnostic tools may lead to incorrect treatment plans for certain demographic groups, causing severe health safety risks.

As AI becomes more influential, ensuring fairness and eliminating bias is not optional; it is critical.

Types of AI Bias

AI bias can enter the system at multiple stages of the AI lifecycle. Here are the common types of AI bias:

- Data Bias: Training data for the AI model is the most common source of bias. If the training data is inaccurate, incomplete, unbalanced, or unrepresentative, the AI model will generate distorted, skewed output.

For example, if an AI model is trained mostly on urban data, it may not perform correctly in rural contexts.

- Sampling Bias: When certain sample groups are underrepresented or overrepresented in the dataset, a sampling bias occurs. In this case, the data used to train the model is not large enough, not representative enough, or too incomplete. As a result, the model is not sufficiently trained.

For example, if an AI model is trained only on school teachers with the same academic qualifications, it may rate future teachers favorably only when they hold those same qualifications.

- Algorithmic Bias: The model’s design may favor certain outcomes due to assumptions or optimization choices. If incorrect questions are asked or if the feedback to the machine learning algorithm does not guide the search for a solution, it can result in misinformation.

- Human Bias: This is also known as cognitive bias and seeps into the system through subjective decisions in data labeling, model development, and other stages of the lifecycle. Human biases in AI models reflect the prejudices and cognitive biases of the individuals and teams involved in developing the AI technologies.

- Confirmation Bias: Developers may unintentionally reinforce their own expectations while designing AI systems. A model that relies too heavily on pre-existing beliefs or data trends doubles down on existing biases and fails to identify new trends or patterns.

- Feedback Loop Bias: Once an AI model is deployed, biased outputs can reinforce themselves over time.

For example, a recommendation system that shows only popular content collects more clicks on that content, which makes it look even more popular and pushes everything else further out of view.

- Exclusion Bias: This type of bias occurs when important data is excluded from the data being used. This happens because the developer has failed to see new and important factors.

- Measurement Bias: Incomplete data, caused by an oversight or lack of preparation, results in a dataset not including the whole population that should be considered.

For example, if a college wanted to predict the factors for successful graduation, but included only students who graduated, the outcomes would completely miss the factors that cause some students to drop out.

- Out-group Homogeneity Bias: People tend to feel they understand members of their own group (the ingroup) better and to see them as more diverse than members of other groups (the outgroup). As a result, developers may build algorithms that are less capable of distinguishing between individuals who are not part of the majority group in the training data, which leads to racial bias, misclassification, and incorrect answers.

- Stereotyping Bias: When AI systems inadvertently reinforce harmful stereotypes, stereotyping bias occurs.

For example, a language translation system could associate some languages with certain genders or ethnic stereotypes. However, if you attempt to remove the classes or attributes that make the algorithm biased, it may affect the overall model understanding and the outcomes.

Real-World Examples of AI Bias

- Facial Recognition Errors: AI systems from major tech companies exhibit higher error rates for darker-skinned women than for lighter-skinned men.

- Hiring Tools: AI models may favor resumes from certain demographics in a hiring system. In 2018, Amazon had to abandon a recruiting tool that penalized resumes containing the word “women’s” because it had been trained on a decade of male-dominated hiring.

- Loan Approvals: In financial services, such as loan approvals, biased risk models can affect minority groups. Apple Card’s AI was under investigation for providing lower credit limits to women (gender bias), including in cases where wives had better credit scores than their husbands.

- Healthcare Systems: A widely used US healthcare algorithm showed racial bias because it used past medical costs, rather than actual medical need, as a proxy for health.

- Image Generation: Image generation tools such as Midjourney and Stable Diffusion associate high-profile jobs like “CEO” with white men and create stereotypical, sexualized, or marginalized images of women and minorities.

All these examples highlight how bias can lead to unfair and harmful decisions.

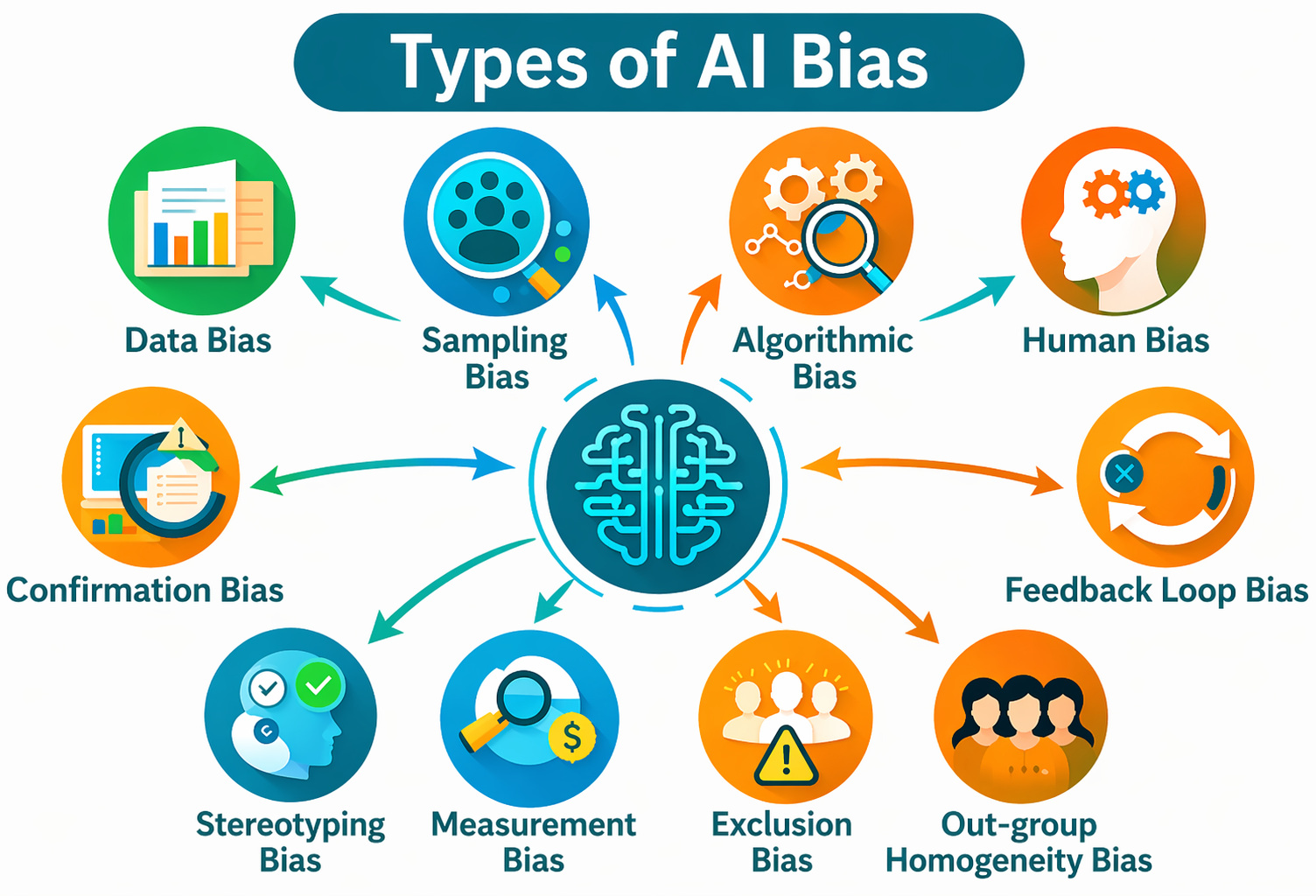

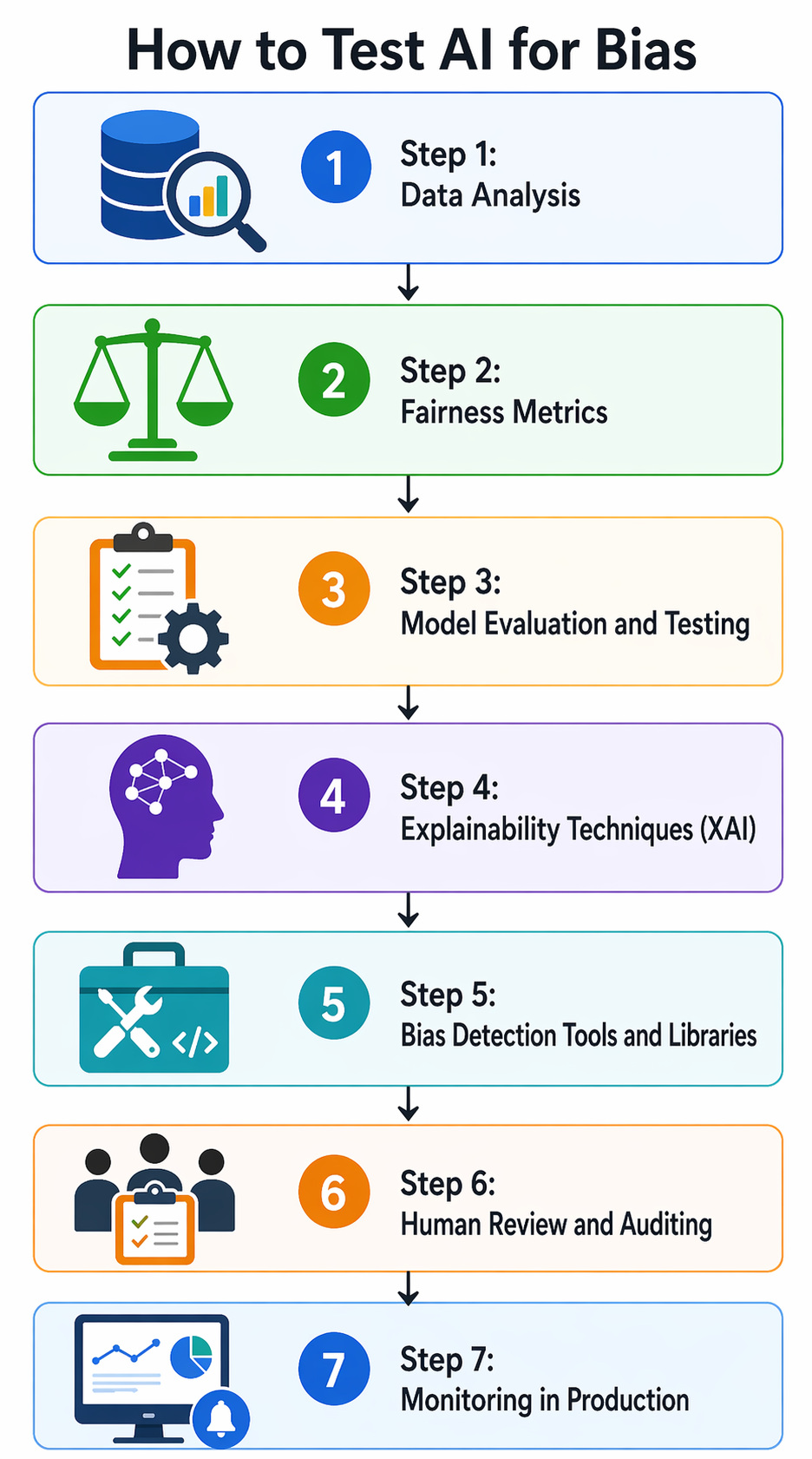

How to Test AI for Bias

Testing AI for bias is a structured, ongoing process that includes identifying, measuring, and mitigating unfair patterns in AI behavior. Here are the steps in the testing process:

Step 1: Data Analysis

Examine how well various demographic groups and categories are represented in your training data. Identify patterns of missing data and proxy variables that may introduce bias into the model.

Ensure the accuracy and consistency of data across groups.
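As a rough illustration of the data analysis step, the sketch below reports each group's share of the dataset and its rate of positive labels. The field names `gender` and `hired` and the toy records are hypothetical, chosen only to make the example concrete.

```python
from collections import Counter

def representation_report(records, group_key, label_key):
    """Return {group: (share_of_dataset, positive_label_rate)}."""
    counts = Counter(r[group_key] for r in records)
    positives = Counter(r[group_key] for r in records if r[label_key] == 1)
    total = len(records)
    return {g: (counts[g] / total, positives[g] / counts[g]) for g in counts}

# Hypothetical toy data; real audits would load the actual training set.
records = [
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 1},
    {"gender": "M", "hired": 0},
    {"gender": "F", "hired": 0},
]

report = representation_report(records, "gender", "hired")
for group, (share, positive_rate) in sorted(report.items()):
    print(f"{group}: share={share:.2f}, positive rate={positive_rate:.2f}")
```

A group with a small share or a very different positive rate is not proof of bias, but it marks where to look more closely before training.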

Step 2: Fairness Metrics

- Demographic Parity: Assess if the proportion of positive outcomes is the same across all groups.

- Equalized Odds: Check whether the true-positive and false-positive rates are equal across groups.

- Equal Opportunity: Verify if the true positive rates are the same for all groups.

- Predictive Parity: Check if the positive predictive values are equal across groups.

- Disparate Impact: Check whether the unprivileged group receives a positive outcome at a rate below 80% of the rate for the privileged group (the four-fifths rule).
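The metrics above all derive from per-group confusion-matrix counts. The following is a minimal sketch in plain Python, assuming binary labels and predictions; the group names `A` and `B` and the toy arrays are illustrative, not taken from any real system.

```python
def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, TPR, FPR, and PPV for binary labels."""
    nan = float("nan")
    stats = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        tp = sum(y_true[i] == 1 and y_pred[i] == 1 for i in idx)
        fp = sum(y_true[i] == 0 and y_pred[i] == 1 for i in idx)
        fn = sum(y_true[i] == 1 and y_pred[i] == 0 for i in idx)
        tn = sum(y_true[i] == 0 and y_pred[i] == 0 for i in idx)
        stats[g] = {
            "selection_rate": (tp + fp) / len(idx),   # demographic parity
            "tpr": tp / (tp + fn) if tp + fn else nan,  # equal opportunity
            "fpr": fp / (fp + tn) if fp + tn else nan,  # with TPR: equalized odds
            "ppv": tp / (tp + fp) if tp + fp else nan,  # predictive parity
        }
    return stats

def disparate_impact(stats, unprivileged, privileged):
    """Ratio of selection rates; below 0.8 fails the four-fifths rule."""
    return stats[unprivileged]["selection_rate"] / stats[privileged]["selection_rate"]

# Hypothetical toy data: group A is treated as privileged.
groups = ["A"] * 5 + ["B"] * 5
y_true = [1, 1, 0, 0, 1, 1, 1, 0, 0, 1]
y_pred = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]

stats_by_group = group_rates(y_true, y_pred, groups)
di = disparate_impact(stats_by_group, unprivileged="B", privileged="A")
print(stats_by_group)
print(f"disparate impact: {di:.2f}")
```

In this toy data, group B is selected at a quarter of group A's rate, so it fails the four-fifths rule, and its true-positive rate is also lower, so equal opportunity is violated as well.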

Step 3: Model Evaluation and Testing

Run a performance disparity analysis to compare the model’s accuracy, precision, recall, and other performance metrics across different demographic groups. Significant differences between groups can indicate bias.

Adversarial testing can also be performed in this step to test the model by providing carefully crafted inputs to expose potential biases.

Conduct subgroup analysis to evaluate the model’s performance on specific intersections of different demographic groups, and “What-if” analysis using tools to understand how changes in input features affect the model’s predictions.
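Subgroup analysis can be sketched as grouping evaluation rows by a combination of attributes rather than by one attribute at a time. The attribute names `gender` and `skin` and the toy rows below are hypothetical.

```python
from collections import defaultdict

def subgroup_accuracy(rows, keys):
    """Accuracy for each combination of the attributes named in `keys`."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for row in rows:
        subgroup = tuple(row[k] for k in keys)
        totals[subgroup] += 1
        hits[subgroup] += int(row["y_true"] == row["y_pred"])
    return {sg: hits[sg] / totals[sg] for sg in totals}

# Hypothetical evaluation rows: the model is wrong only for one intersection.
rows = [
    {"gender": "F", "skin": "dark", "y_true": 1, "y_pred": 0},
    {"gender": "F", "skin": "dark", "y_true": 1, "y_pred": 0},
    {"gender": "F", "skin": "light", "y_true": 1, "y_pred": 1},
    {"gender": "F", "skin": "light", "y_true": 0, "y_pred": 0},
    {"gender": "M", "skin": "dark", "y_true": 1, "y_pred": 1},
    {"gender": "M", "skin": "dark", "y_true": 0, "y_pred": 0},
    {"gender": "M", "skin": "light", "y_true": 1, "y_pred": 1},
    {"gender": "M", "skin": "light", "y_true": 0, "y_pred": 0},
]

by_gender = subgroup_accuracy(rows, ["gender"])
by_intersection = subgroup_accuracy(rows, ["gender", "skin"])
print(by_gender)
print(by_intersection)
```

Here the single-attribute view shows 50% accuracy for women, while the intersectional view reveals that every error falls on one subgroup, which a per-attribute report would understate.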

Step 4: Explainability Techniques (XAI)

In this step, you use feature importance analysis to identify which features have the greatest impact on the model’s predictions across groups. If sensitive attributes or their proxies are among the most influential features, that can indicate bias.


Techniques such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) have proven helpful.

Saliency maps, which highlight the regions of an image that a model focuses on when making a decision in computer vision, are used to reveal biases.
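SHAP and LIME are separate libraries with their own APIs. As a simpler stand-in for feature importance analysis, the sketch below uses permutation importance: shuffle one feature across rows and measure how much accuracy drops. The `toy_model` function and the `zip_code` and `income` features are hypothetical, with `zip_code` playing the role of a possible proxy for a protected attribute.

```python
import random

def permutation_importance(predict, rows, labels, feature, seed=0):
    """Drop in accuracy after shuffling one feature's values across rows."""
    def accuracy(data):
        return sum(predict(r) == y for r, y in zip(data, labels)) / len(labels)

    baseline = accuracy(rows)
    shuffled_values = [r[feature] for r in rows]
    random.Random(seed).shuffle(shuffled_values)
    # Copy each row with only the chosen feature replaced.
    shuffled_rows = [dict(r, **{feature: v}) for r, v in zip(rows, shuffled_values)]
    return baseline - accuracy(shuffled_rows)

def toy_model(row):
    """Hypothetical model that decides purely on zip code and ignores income."""
    return int(row["zip_code"] == "A")

rows = [
    {"zip_code": "A", "income": 30},
    {"zip_code": "A", "income": 80},
    {"zip_code": "B", "income": 35},
    {"zip_code": "B", "income": 90},
]
labels = [toy_model(r) for r in rows]  # baseline accuracy is 1.0 by construction

income_importance = permutation_importance(toy_model, rows, labels, "income")
zip_importance = permutation_importance(toy_model, rows, labels, "zip_code")
print(f"income: {income_importance:.2f}, zip_code: {zip_importance:.2f}")
```

A feature the model ignores always scores zero. A high score for a feature that correlates with a protected attribute is a signal to investigate, not a verdict.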

Step 5: Bias Detection Tools and Libraries

- Google’s What-If Tool: Tests how your AI behaves in different situations.

- IBM AI Fairness 360: A toolkit that checks for many kinds of bias.

- Microsoft Fairlearn: Helps evaluate and improve fairness in AI models.

- testRigor: Tests for bias, negative/positive statements, and true/false statements using plain English.

Step 6: Human Review and Auditing

- Diverse Teams: Include individuals from diverse backgrounds in the development and evaluation process. They can bring different perspectives on potential biases.

- Bias Audits: Commission independent audits of the AI system, including the data, model, and deployment process, to identify and assess potential biases.

- User Feedback: Collect feedback from users across demographic groups to identify any perceived unfairness or discriminatory outcomes.

Step 7: Monitoring in Production

Bias testing does not end at release. Track the model’s performance and fairness metrics continuously in real-world deployment settings.

Data drift or evolving user behavior may cause bias to emerge or change over time. Hence, continuous monitoring is essential. You can set up alerts to notify developers in specific situations, for example, when fairness metrics fall below acceptable thresholds.
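One way to picture such an alert is a periodic check that compares each group's selection rate with the best-off group and flags ratios below a threshold. The function name, the 0.8 default, and the weekly figures below are assumptions for illustration; a production system would send the messages to its own alerting channel.

```python
def check_fairness(selection_rates, threshold=0.8):
    """Return alert messages for groups whose selection-rate ratio,
    relative to the highest-rate group, falls below `threshold`."""
    reference = max(selection_rates.values())
    alerts = []
    for group, rate in sorted(selection_rates.items()):
        ratio = rate / reference if reference else 1.0
        if ratio < threshold:
            alerts.append(f"ALERT: {group} ratio {ratio:.2f} below {threshold:.2f}")
    return alerts

# Hypothetical selection rates computed from one week of production traffic.
weekly_rates = {"A": 0.50, "B": 0.45, "C": 0.30}
alerts = check_fairness(weekly_rates)
for message in alerts:
    print(message)
```

Running the same check on every batch of production data turns the fairness metrics from a one-time report into a monitored signal.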

Techniques to Mitigate AI Bias

Once bias is detected, it must be addressed and mitigated. Common bias mitigation strategies include:

1. Pre-Processing (Data-Level Mitigation)

- Diverse and Representative Data: Ensure training data covers a wide range of perspectives, demographics, and scenarios.

- Data Augmentation/Resampling: Add or remove samples. This helps rebalance data distribution and prevent over- or underrepresentation of groups.

- Reweighting: Underrepresented groups are assigned higher weights and privileged groups are assigned lower weights to reduce skew.

- Anonymization: Remove proxy variables that can act as identifiers for protected attributes like race or gender.
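Reweighting can be sketched with simple inverse-frequency weights, where each sample's weight is inversely proportional to the size of its group. This is one of several possible weighting schemes, shown here as an assumption rather than a prescribed method; the group labels are hypothetical.

```python
from collections import Counter

def group_weights(groups):
    """Inverse-frequency sample weights: n / (k * n_g) for a sample in
    group g, where n is the dataset size and k the number of groups.
    The weights sum to n, so the overall scale of the loss is unchanged."""
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]

# Hypothetical dataset where group B is underrepresented.
groups = ["A", "A", "A", "B"]
weights = group_weights(groups)
print(weights)
```

The resulting list can be passed to any training routine that accepts per-sample weights, so that errors on the underrepresented group count for more during training.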

2. In-Processing (Algorithm-Level Mitigation)

- Fairness Constraints: Learning algorithms are modified to explicitly include fairness metrics during training.

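- Adversarial Debiasing: Two neural networks are trained together: one makes predictions, and the other tries to infer the protected attribute from those predictions. The predictor is penalized whenever the adversary succeeds, which pushes it toward fairer results.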

- Ensemble Modeling: Multiple models are combined to average out individual model biases.

3. Post-Processing (Outcome-focused Mitigation)

- Threshold Adjustment: Classification thresholds are adjusted to ensure equitable outcomes across groups.

- Reject Option-based Classification: Classification decisions are adjusted for low-confidence predictions to favor disadvantaged groups.
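Threshold adjustment can be illustrated by choosing a separate score cutoff for each group so that the groups end up with similar selection rates. The sketch below targets demographic parity only; the helper names, the 50% target rate, and the toy scores are assumptions for illustration.

```python
def pick_threshold(scores, target_rate):
    """Cutoff such that roughly `target_rate` of scores are at or above it.
    Tied scores at the cutoff can push the actual rate slightly higher."""
    ranked = sorted(scores, reverse=True)
    k = max(1, round(target_rate * len(ranked)))
    return ranked[k - 1]

def predict_with_group_thresholds(scores, groups, thresholds):
    """Binary decisions using each sample's group-specific cutoff."""
    return [int(s >= thresholds[g]) for s, g in zip(scores, groups)]

# Hypothetical model scores: group B's scores are systematically lower.
scores = [0.9, 0.8, 0.7, 0.6, 0.6, 0.5, 0.4, 0.3]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

thresholds = {
    g: pick_threshold([s for s, gi in zip(scores, groups) if gi == g], 0.5)
    for g in sorted(set(groups))
}
decisions = predict_with_group_thresholds(scores, groups, thresholds)
print(thresholds)
print(decisions)
```

With a single cutoff of 0.65, group A would be selected three times out of four and group B never; with per-group cutoffs, both groups are selected at the same rate.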

4. Human Oversight

- Use diverse AI teams to identify potential biases that might otherwise be overlooked.

- Conduct regular audits and post-deployment monitoring to catch emerging biases.

- Use Explainable AI (XAI) to make AI decision-making processes interpretable.

5. Continuous Monitoring

Bias mitigation is not a one-time task but a continuous process. Track bias over time and update models regularly as data and usage change.

Challenges in Testing AI Bias

- Defining Fairness: It is challenging to define and measure fairness as different contexts require different metrics. There is no universally accepted definition of fairness.

- Trade-offs: In some cases, as fairness is improved, accuracy may be reduced.

- Hidden Biases: Detecting subtle, hidden biases is a challenge.

- Data Limitations: If demographic data is not labeled, testing can be a challenge.

- Dynamic Systems: AI systems evolve over time. New biases may be introduced in these cases.

The Future of Bias Testing in AI

As AI adoption grows, bias testing is becoming a core part of responsible AI development. Emerging trends include:

- Automated bias detection systems

- Regulatory frameworks for AI fairness

- Standardized fairness benchmarks

- Integration of ethics into AI pipelines

Organizations are increasingly recognizing that fairness is no longer just an ethical consideration but is also essential for business success and user trust.

Conclusion

AI bias has emerged as a critical challenge in the development and deployment of artificial intelligence systems. It stems from data, design, and human factors, and can lead to unfair or harmful outcomes if left unchecked.

Testing AI for bias involves a combination of data audits, fairness metrics, group-based evaluations, and advanced testing techniques. By implementing robust testing and mitigation techniques, developers can build AI systems that are not only accurate but also fair, inclusive, and trustworthy.

Ultimately, addressing AI bias is not just a technical responsibility but a societal obligation. As AI continues to shape the world, ensuring fairness must remain at the heart of innovation.

Frequently Asked Questions (FAQs)

- Can AI bias be completely eliminated?

No, AI bias cannot be entirely eliminated, but it can be significantly reduced through proper data handling, testing, and continuous monitoring.

- How often should AI systems be tested for bias?

AI systems should be tested regularly during development, before deployment, and continuously after deployment to ensure fairness over time.

- How does data quality affect AI bias?

Poor-quality or unrepresentative data increases the likelihood of bias, as the AI model learns patterns that do not reflect real-world diversity.

- What is bias mitigation in AI?

Bias mitigation involves techniques used to reduce unfairness in AI systems, such as balancing datasets, adjusting algorithms, or modifying outputs.

- What is the difference between bias and fairness in AI?

Bias refers to unfair outcomes, while fairness focuses on ensuring equitable treatment and minimizing discrimination across different groups.