If you’ve ever yelled at Google Maps because it sent you down a road that doesn’t exist instead of rerouting you sensibly, you already understand the problem of missing context!

Strange how smart AI seems at first glance. Sentences flow from chat programs as if written by humans. Code appears out of nowhere thanks to digital helpers. Voices respond without hesitation, almost familiar. Yet glitches emerge without warning. An answer fits facts but misses the point entirely. It forgets what you said two messages ago. A basic request, such as “change it”, becomes confusing all of a sudden.

The system heard your request. It processed the data. It followed the rules. And yet something slipped, because the context around you stayed invisible to it. When this happens, the issue usually isn’t the model. It’s the context. Most people notice right away when AI gets them and when it doesn’t, and what separates those two experiences is context.

If you’re building, buying, or relying on AI for real work, this concept isn’t academic—it’s practical. It shapes whether things move forward smoothly or break down at critical moments. The real test isn’t performance in controlled settings. It’s what happens when demands shift, details pile up, and expectations grow.


AI Context – Meaning and Definition

AI context refers to the surrounding information that allows an artificial intelligence system to correctly interpret an input and produce a relevant, accurate output. Past conversations matter just as much as fresh input typed today. Clues from timing, location, or device settings feed into understanding, too. Goals set at the start of the conversation quietly guide each reply behind the scenes.

Put plainly, context comes from surroundings – without them, information stays ambiguous.

For example, consider someone saying, “That didn’t work.” On its own, the phrase means almost nothing. Did a password entry fail? Did a piece of software crash? Is an ad strategy falling flat? Humans resolve this automatically by recalling prior events. AI systems need those details handed to them directly or stored as context. Without that background, the ambiguity remains.

This changes how we see AI – context isn’t just helpful, it’s built into everything. Without it, nothing holds together.

Why Context Matters in Artificial Intelligence

AI systems typically fail quietly when context goes missing. They offer responses that appear correct yet miss the point: polite errors instead of crashes.

A model can look good in a demo, yet real use exposes problems. Accuracy drops fast once tasks span multiple steps, conversations go back and forth, or details turn personal. Context helps with:

- Understanding intent instead of just keywords

- Maintaining continuity across conversations

- Adapting responses to a user’s role, goals, or environment

- Reducing hallucinations in generative AI

One limitation that teams often underestimate is that AI does not “remember” unless you design for it. Lose the context, and suddenly the model is just predicting blindly from probabilities.

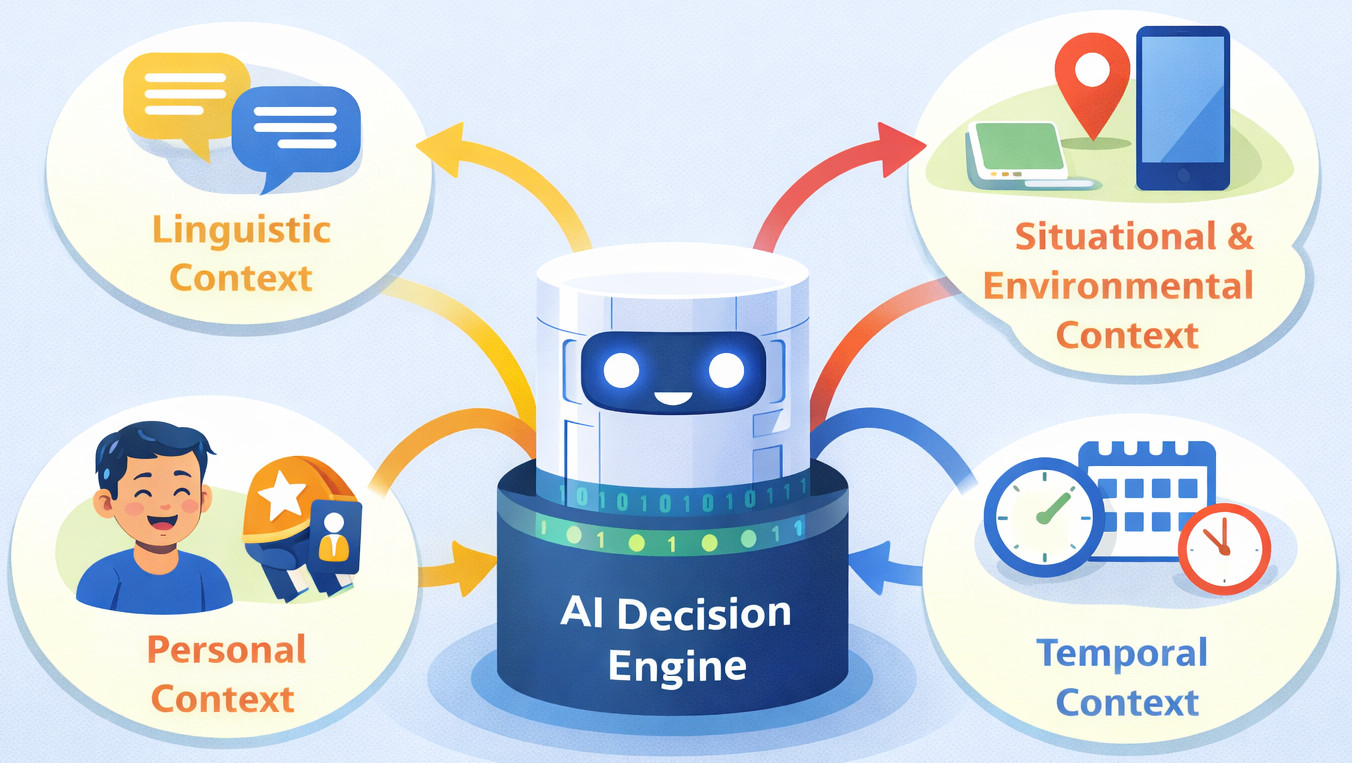

Types of Contexts in AI Systems

Linguistic Context in AI

Linguistic context shapes how we understand a phrase: previous messages, sentence structure, tone, and what is hidden between the lines. These contexts matter a lot when AI systems try to make sense of ambiguous parts. Models watch closely for clues in the flow.

For example: A message like “Can you rewrite this?” leaves the model guessing. What does “this” point to: an email, a paragraph, or a code snippet shared earlier? Without that reference, the model cannot tell where to apply its effort, and the response falters.

Situational and Environmental Context

Situational context includes location, device, time, and current activity. “Later” means nothing if the system does not know what time it is now. Where someone is, what they’re using, the hour of day, and what they happen to be doing all shape the meaning of a request.

For example: A voice assistant needs to figure out the situation when someone says, “turn it down.” Is music running? An active call? Or is it a TV?

Yes, it sounds simple, yet pulling live data into AI systems turns out to be a massive engineering headache.
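To make the “turn it down” example concrete, here is a minimal sketch of how a voice assistant might use situational context to decide what “it” refers to. The `DeviceState` fields and the priority order (call first, then music, then TV) are assumptions made for illustration, not a description of how any real assistant works.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeviceState:
    """Hypothetical snapshot of what is currently active around the user."""
    call_active: bool = False
    music_playing: bool = False
    tv_on: bool = False


def resolve_volume_target(state: DeviceState) -> Optional[str]:
    """Pick what "it" most plausibly refers to, using an assumed priority."""
    if state.call_active:
        return "call"
    if state.music_playing:
        return "music"
    if state.tv_on:
        return "tv"
    # Nothing is playing, so the assistant should ask instead of guessing.
    return None


print(resolve_volume_target(DeviceState(music_playing=True)))  # music
```

The logic itself is trivial. The hard part, as noted above, is keeping a state object like this accurate in real time.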

User and Personal Context

Personal context includes user preferences, prior behavior, role, and expertise level. A developer and a CEO asking the same question often need very different answers. The difference in expected answers reflects what each person cares about.

For example: When both ask, “How do we improve system performance?”, one expects architectural trade-offs while the other expects business impact.

One limitation teams often underestimate is how quickly personal context becomes outdated. Preferences change, roles shift, and stale context can be worse than no context at all.
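One simple way to guard against stale personal context is to timestamp every stored fact and drop anything older than a cutoff before it reaches the model. The sketch below assumes a hypothetical `ContextEntry` record and an arbitrary 90-day limit; the right cutoff depends on the kind of data involved.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class ContextEntry:
    """Hypothetical stored fact about a user, with its last update time."""
    key: str
    value: str
    updated_at: datetime


def fresh_entries(
    entries: List[ContextEntry],
    now: datetime,
    max_age: timedelta = timedelta(days=90),  # assumed cutoff, tune per use case
) -> List[ContextEntry]:
    """Keep only entries updated within max_age; older ones are dropped."""
    return [entry for entry in entries if now - entry.updated_at <= max_age]


entries = [
    ContextEntry("role", "developer", datetime(2024, 12, 1)),
    ContextEntry("team", "payments", datetime(2023, 1, 1)),
]
recent = fresh_entries(entries, now=datetime(2025, 1, 1))
print([entry.key for entry in recent])  # ['role']
```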

Temporal Context

Besides knowing what’s going on right now, an AI system uses time clues to piece together earlier events and anticipate later ones. What came first shapes how it interprets the present moment and what follows.

For example: When a user says “undo”, they almost always mean the most recent action, not an earlier one. To honor that, the system has to know which action came last. Reversing an older action would miss what the user actually wants undone.

In real projects, this usually breaks when systems treat each interaction as stateless, forcing users to repeat themselves.
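The “undo” example only works if the system keeps an ordered record of what happened. A minimal sketch, assuming a hypothetical `EditSession` class that holds state between interactions:

```python
from typing import List, Optional


class EditSession:
    """Keeps an ordered history so "undo" can target the latest action."""

    def __init__(self) -> None:
        self.history: List[str] = []

    def apply(self, action: str) -> None:
        """Record an action in the order it happened."""
        self.history.append(action)

    def undo(self) -> Optional[str]:
        """Reverse only the most recent action; None if nothing to undo."""
        return self.history.pop() if self.history else None


session = EditSession()
session.apply("bold title")
session.apply("delete paragraph")
print(session.undo())   # delete paragraph
print(session.history)  # ['bold title']
```

A stateless design has no `history` to consult, which is why the user ends up having to spell out what to undo.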

How AI Understands Context

As of now, many artificial intelligence tools, particularly LLMs, depend on something called a context window. A context window is the amount of text (measured in tokens) the model can “see” at once.

The model keeps no long-term memory unless the system is set up to provide it. It predicts what comes next using patterns found inside the current window.

Here’s where things get mixed up. Users assume AI grasps context the way people do, as if it simply understands what the words mean together. It doesn’t. It recognizes statistical relationships between the pieces of information placed in front of it.

Because of this, the way you share context shapes understanding as much as the details themselves.
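To illustrate the window limit, the sketch below keeps only the newest messages that fit a token budget. The `count_tokens` function is a crude whitespace-based stand-in assumed for this example; real tokenizers split text differently, so real counts will differ.

```python
from typing import List


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def fit_to_window(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the newest messages whose combined size fits the budget."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


messages = [
    "my order number is 1234",
    "it arrived damaged",
    "can you replace it",
]
print(fit_to_window(messages, max_tokens=7))
# ['it arrived damaged', 'can you replace it']
```

Notice that the order number falls out of the window. From the user’s side, this looks like the AI “forgetting” what was said two messages ago.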

AI Context vs Memory vs Knowledge

- Context: Information available to the model right now

- Memory: Information intentionally stored and reintroduced later

- Knowledge: What the model learned during training

These distinctions might seem straightforward, yet teams often ignore them in practice. Models get asked to recall details that were never stored or never supplied again.

| Concept | What It Is | Common Misunderstanding |
|---|---|---|
| Context | Info available now | “The AI remembers this” |
| Memory | Stored + reintroduced info | Assumed automatic |
| Knowledge | Training data | Assumed up-to-date |

In real projects, things usually fall apart when someone comes back after a few days expecting to pick up the same flow, only to find the AI system remembers nothing.
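The distinction between memory and context can be sketched in a few lines: memory is something the application stores, and it only becomes context when the application puts it back into the prompt. `MemoryStore` and `build_prompt` below are hypothetical names, and a real system would persist facts in a database rather than in a dictionary.

```python
from typing import Dict, List


class MemoryStore:
    """Hypothetical in-process store; a real system would persist this."""

    def __init__(self) -> None:
        self._facts: Dict[str, List[str]] = {}

    def remember(self, user_id: str, fact: str) -> None:
        """Intentionally store a fact for later sessions."""
        self._facts.setdefault(user_id, []).append(fact)

    def recall(self, user_id: str) -> List[str]:
        """Return a copy of everything stored for this user."""
        return list(self._facts.get(user_id, []))


def build_prompt(store: MemoryStore, user_id: str, user_message: str) -> str:
    """Reintroduce stored facts as context ahead of the new message."""
    lines: List[str] = []
    facts = store.recall(user_id)
    if facts:
        lines.append("Known facts about this user:")
        lines.extend(f"- {fact}" for fact in facts)
    lines.append(f"User: {user_message}")
    return "\n".join(lines)


store = MemoryStore()
store.remember("u1", "prefers Python")
print(build_prompt(store, "u1", "Continue where we left off"))
```

If `remember` is never called, or `build_prompt` skips the recall step, the model sees only the new message and has nothing to continue from.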

Context Windows and Their Limits

Larger context windows allow models to process lengthier conversations and documents. But they are not a silver bullet.

Here’s something teams tend to overlook: cost increases with context size. Bigger context windows slow responses down, consume more compute, and eat up budget. Latency rises alongside the bill.

Also, more context sometimes makes things worse instead of helping. When the added context has nothing to do with the task, the model gets distracted, and answer quality drops because the relevant signal is buried.

This is why many teams are moving toward context engineering rather than just bigger prompts.
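As a rough illustration of choosing context rather than adding more of it, the sketch below scores each candidate snippet against the query and keeps only the ones that clear a threshold. The word-overlap score and the 0.5 threshold are deliberately simplistic assumptions; production systems typically rely on embeddings or a dedicated ranking model.

```python
from typing import List


def relevance(query: str, snippet: str) -> float:
    """Fraction of query words that also appear in the snippet."""
    query_words = set(query.lower().split())
    snippet_words = set(snippet.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & snippet_words) / len(query_words)


def select_context(
    query: str, snippets: List[str], min_score: float = 0.5
) -> List[str]:
    """Keep only snippets that overlap enough with the query."""
    return [s for s in snippets if relevance(query, s) >= min_score]


snippets = [
    "How to reset your password",
    "Quarterly revenue report",
]
print(select_context("reset password", snippets))
# ['How to reset your password']
```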

Context Engineering in Real Systems

Context engineering is the practice of deliberately selecting, structuring, and delivering the right information to an AI system at the right time. Context works better when delivered in measured steps than when dumped in all at once. Common techniques include:

- Retrieving relevant documents (RAG)

- Injecting system-level instructions

- Managing short-term and long-term memory

- Filtering irrelevant history

In theory, it seems fine. Yet real-world results tell a different story. Retrieval quality is often the weakest link. If the wrong documents are retrieved, the AI confidently produces the wrong answer.
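The techniques listed above can be combined into a single assembly step. The sketch below is one possible arrangement, not a standard pipeline: it assumes retrieval has already produced `(score, text)` pairs, and the `min_score` and `max_history` values are arbitrary. It also guards against weak retrieval by telling the model to admit when nothing relevant was found.

```python
from typing import List, Tuple


def assemble_context(
    system_instruction: str,
    retrieved: List[Tuple[float, str]],  # assumed (relevance score, text) pairs
    history: List[str],
    max_history: int = 2,
    min_score: float = 0.3,
) -> List[str]:
    """Build prompt sections: instruction, vetted documents, recent history."""
    sections = [f"System: {system_instruction}"]

    # Only pass along documents that clear the relevance threshold.
    good_docs = [text for score, text in retrieved if score >= min_score]
    if good_docs:
        sections.extend(f"Document: {text}" for text in good_docs)
    else:
        sections.append(
            "Note: no relevant documents found; say so instead of guessing."
        )

    # Filter history down to the most recent turns.
    recent = history[-max_history:] if max_history > 0 else []
    sections.extend(f"History: {turn}" for turn in recent)
    return sections


sections = assemble_context(
    "Answer from the documents only.",
    [(0.9, "Refunds take 5 days."), (0.1, "Office hours are 9 to 5.")],
    ["hi", "I returned an item", "when do I get my money"],
)
print("\n".join(sections))
```

The low-scoring document and the oldest turn are left out, so the model sees less text but more of what matters.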

Why Context Is the Key to Better Generative AI

Generative AI failures are rarely random. The root cause is usually missing grounding; without it, outputs drift. Lacking enough context, models fill the gaps with answers that merely seem reasonable. That’s where hallucinations come from.

In real projects, things usually fall apart when people trust the model alone instead of combining it with external sources and clear constraints. Context doesn’t make AI smarter. It makes AI less wrong.

Symptoms and How to Decide If You Have a Context Problem

- Users repeat themselves often

- Answers are technically correct but practically useless

- The AI model contradicts itself: what it said earlier clashes with what it says now

- Personalization feels shallow or random

| Symptom | Likely Cause |
|---|---|
| Users repeat themselves | Context loss |
| Answers feel generic | Missing personal context |
| Contradictions | Poor temporal context |
| Confident wrong answers | Bad retrieval context |

When these symptoms feel familiar, the fix usually isn’t a smarter model. It’s better context.

Risks of Over-Contextualization

Over-contextualization happens when AI systems are fed excessive or poorly filtered information. Rather than sharpening results, it usually brings higher costs, more hallucinations, and sluggish replies, because the system drowns in noise. Sorting signal from clutter becomes harder, so performance takes a hit even though the extra details were added on purpose.

There are also material risk considerations. Holding on to user details too long becomes a problem when systems lack strong oversight. Without guardrails, privacy erodes, regulations get violated, and information spreads further than intended.

Finally, outdated context creates silent failure. When user needs, company policies, or market realities shift, AI systems anchored to stale context continue operating on assumptions that are no longer valid.

Effective AI systems don’t accumulate context indiscriminately. They select, refresh, and discard context deliberately. This balances relevance, risk, and performance.

Conclusion

Users often hit a wall when trying to get a relevant answer, and they sense that prompting alone isn’t enough. Understanding context helps you:

- Build more reliable AI products

- Set realistic expectations

- Diagnose failures correctly

- Decide when AI is actually ready for production

AI context is not a feature you tack on at the end. It is the difference between a demo that impresses and a system that actually works.


FAQs

What does “context” mean in AI systems?

In AI, context refers to the surrounding information that helps a system interpret an input correctly. It includes conversation history, user intent, timing, location, and relevant background data. Without context, AI responses may be technically correct but practically useless.

Is more context always better for AI?

No. Over-contextualization can slow systems down, increase costs, introduce privacy risks, and even reduce accuracy. Effective AI systems curate context carefully, selecting only what’s relevant and discarding stale or unnecessary information.

How do RAG and context engineering improve AI responses?

Retrieval-Augmented Generation (RAG) and context engineering work by supplying the AI with the right information at the right moment, instead of relying on the model to guess. RAG retrieves relevant documents or data on demand, while context engineering controls what gets included, excluded, or refreshed. Together, they reduce hallucinations, improve accuracy, and make AI systems more reliable in real-world use.